When you ask ChatGPT a question, use Google Translate, or watch a video with auto-subtitles, you are seeing Transformers and Attention Mechanisms at work. These ideas changed Artificial Intelligence by helping machines understand not just single words, but also the meaning of entire sentences.

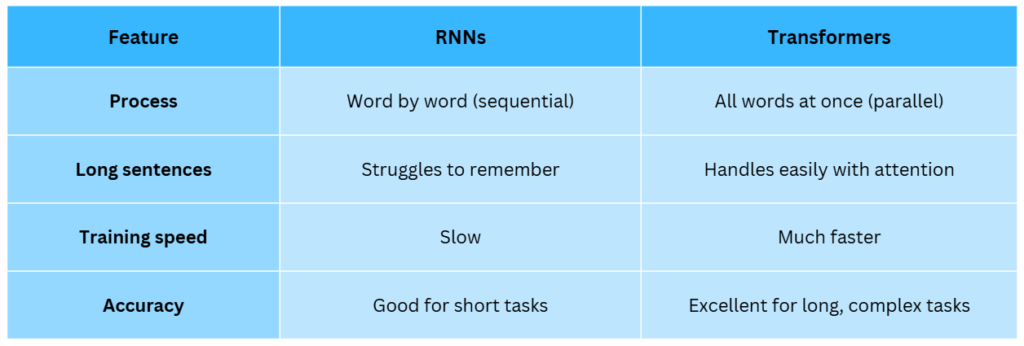

Before Transformers, recurrent models such as RNNs and LSTMs often struggled with long texts and were slow to train, because they had to read a sentence one word at a time. Transformers eased both problems by looking at all the words in a sentence at the same time and using attention to decide which ones matter most.

In this article, we will learn what Transformers are, why attention is powerful, how they handle long sentences, how they compare with older models like RNNs, and how modern tools like ChatGPT use them every day.

What Are Transformers?

Transformers are a type of deep learning model that changed how machines understand language. They were introduced in the 2017 research paper “Attention Is All You Need”, and since then they have become the foundation of most advanced language AI systems.

Unlike older models that read sentences word by word (like Recurrent Neural Networks), Transformers look at the whole sentence at once. Because the words can be processed in parallel, Transformers are faster to train and better at finding meaning in long pieces of text.

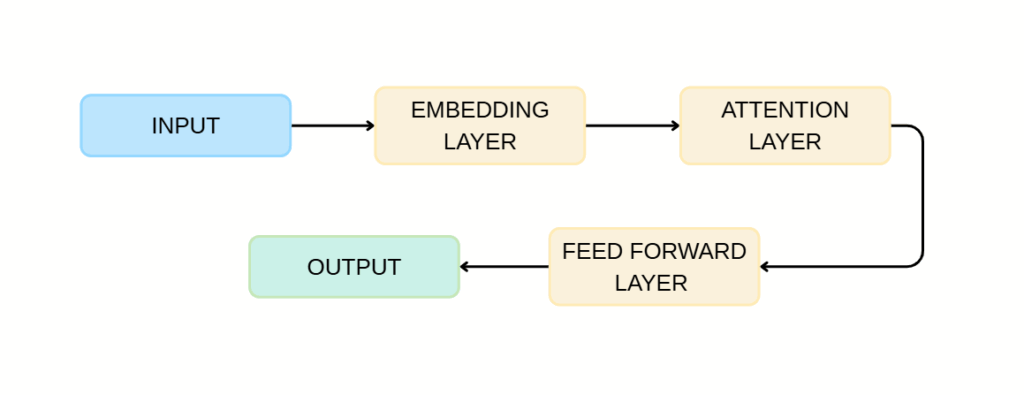

At a high level, a Transformer relies on two main steps:

- Embedding – Words are turned into numbers so the computer can work with them.

- Attention – The model figures out which words in the sentence are most important for understanding the meaning.
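
The embedding step in the list above can be pictured as a simple lookup table. The sketch below is only an illustration: the table name `EMBEDDINGS`, the helper `embed`, and every number in it are made up for this example, whereas a real model learns its vectors during training and uses far more dimensions.

```python
# Toy embedding table: each word maps to a small vector of numbers.
# The values are invented for illustration; real models learn them.
EMBEDDINGS = {
    "the": [0.1, 0.0, 0.2],
    "dog": [0.9, 0.1, 0.3],
    "ball": [0.2, 0.8, 0.5],
}


def embed(sentence):
    """Turn a sentence into a list of vectors, one per word."""
    return [EMBEDDINGS[word] for word in sentence.lower().split()]


vectors = embed("The dog")
print(vectors)  # [[0.1, 0.0, 0.2], [0.9, 0.1, 0.3]]
```

Once words are numbers, the model can compare them mathematically, which is what the attention step builds on.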

For example, in the sentence:

The dog chased the ball because it was rolling fast.

The word “it” could refer to either “dog” or “ball”. A Transformer can use attention to link “it” to “ball” by looking at the entire sentence together.
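
To make the “it” example a little more concrete, here is a minimal sketch of how attention could score the two candidates. The two-dimensional vectors are hand-picked assumptions, chosen so that “it” sits closer to “ball”; a trained model would arrive at its own vectors from data.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product: larger when two vectors point in a similar direction."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical vectors, hand-picked so that "it" is closer to "ball".
vectors = {
    "dog": [1.0, 0.0],
    "ball": [0.0, 1.0],
    "it": [0.2, 1.5],
}

candidates = ["dog", "ball"]
scores = [dot(vectors["it"], vectors[word]) for word in candidates]
attention = dict(zip(candidates, softmax(scores)))
print(attention)  # "ball" receives the larger weight
```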

This architecture allows Transformers to be used not only in language tasks but also in images, speech, and even biology (like predicting protein structures).

Why Attention Is Powerful

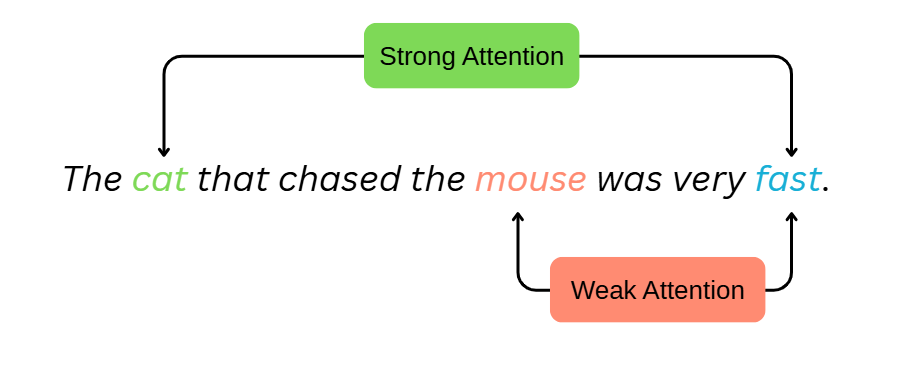

Imagine reading this sentence:

The cat that chased the mouse was very fast.

To understand that “cat” is the one that was “fast”, your brain keeps track of connections across the sentence. That is what attention does in AI: it helps the model focus on the most relevant words when making predictions.

Instead of treating all words equally, the model learns to assign a different attention weight to each word. For example, in a translation task, the model might give the English word “cat” a high weight when producing “gato” in Spanish, and a low weight to unrelated words like “mouse”.

This mechanism makes the network much better at capturing context.
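
A common way to compute these weights is scaled dot-product attention: score every pair of words, turn the scores into weights with softmax, and mix the word vectors using those weights. The sketch below is a simplified version under stated assumptions: the vectors for “cat”, “mouse”, and “fast” are hypothetical, and the learned projection matrices that real Transformers apply to build queries, keys, and values are left out.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Simplification: the learned projections of a real Transformer
    are omitted, so each word vector plays all three roles.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this word against every word, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The output is a weighted average of all the word vectors.
        mixed = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical vectors: "fast" is deliberately placed near "cat".
words = ["cat", "mouse", "fast"]
vectors = [[1.0, 0.2], [0.1, 1.0], [0.9, 0.3]]
outputs, weights = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word attends to every word in the sentence. With these made-up vectors, “fast” puts more weight on “cat” than on “mouse”.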

How Attention Helps in Long Sentences

One of the biggest problems in older RNNs was that they forgot important details in long sentences. For example:

In 1997, Deep Blue defeated Garry Kasparov in chess, which was the first time a computer had beaten a world champion.

An RNN might lose track of “Deep Blue” by the time it reaches “world champion”. But Transformers with attention can link “Deep Blue” to “world champion” directly, no matter how far apart they are in the sentence.
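
The contrast can be illustrated with a deliberately crude toy model. It assumes an RNN-like state that keeps a fixed fraction (`decay`, an invented parameter) of its old contents at every step, and compares that with a dot-product attention score between two hypothetical vectors. Real RNNs and LSTMs are more sophisticated than this, and real Transformers also add positional information, so treat it as an intuition aid rather than a faithful simulation.

```python
def rnn_influence(distance, decay=0.8):
    """Share of an early word's signal left after `distance` steps,
    in a toy model where each step keeps a fixed fraction of the state."""
    return decay ** distance


def attention_score(query, key):
    """Dot-product score between two words; it does not depend on
    how many words lie between them."""
    return sum(q * k for q, k in zip(query, key))


deep_blue = [1.0, 0.5]       # hypothetical vector for "Deep Blue"
world_champion = [0.8, 0.6]  # hypothetical vector for "world champion"

for distance in (2, 10, 20):
    print(
        distance,
        round(rnn_influence(distance), 4),
        round(attention_score(world_champion, deep_blue), 4),
    )
```

In this toy setup the recurrent signal shrinks as the gap grows, while the attention score stays the same at every distance.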

This ability to look at the entire sentence at once is why Transformers became the backbone of translation systems, chatbots, and even tools like Google Search.

Transformers vs. RNNs

To recap the comparison so far: RNNs read a sentence one word at a time, which makes them slow to train and prone to forgetting details in long texts. Transformers process all the words at once and use attention to connect related words directly, however far apart they are.

How ChatGPT and LLMs Use Transformers

Large Language Models (LLMs) such as BERT, GPT-4, and the other GPT models behind ChatGPT are all built on the Transformer architecture.

When you type a question, the model:

- Turns your words into numbers (embeddings).

- Uses attention mechanisms to understand relationships across your sentence.

- Generates a response by predicting the most likely next words, one by one, but based on the full context.
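
The last step in the list above, predicting one word at a time, can be sketched as a simple loop. Everything here is a stand-in: `toy_next_word_probs` is a hypothetical lookup table with invented probabilities, whereas a real LLM computes them with many attention layers over the full context. The loop also always picks the most likely word (greedy decoding), while real systems often sample among several likely words instead.

```python
def toy_next_word_probs(context):
    """Hypothetical stand-in for a trained model: given the words so far,
    return a probability for each possible next word."""
    table = {
        ("attention",): {"is": 0.9, "<end>": 0.1},
        ("attention", "is"): {"all": 0.7, "powerful": 0.3},
        ("attention", "is", "all"): {"you": 0.8, "<end>": 0.2},
        ("attention", "is", "all", "you"): {"need": 0.95, "<end>": 0.05},
    }
    # Unknown contexts simply end the response.
    return table.get(tuple(context), {"<end>": 1.0})


def generate(prompt, max_words=10):
    """Extend the prompt one word at a time using the full context."""
    words = prompt.split()
    for _ in range(max_words):
        probs = toy_next_word_probs(words)
        next_word = max(probs, key=probs.get)  # greedy: take the top word
        if next_word == "<end>":
            break
        words.append(next_word)
    return " ".join(words)


print(generate("attention"))  # attention is all you need
```

Note that every prediction looks at all the words produced so far, not just the previous one.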

That is why ChatGPT can answer long, detailed questions while staying relevant: it is constantly paying attention to the important words and their connections.

Did you know?

| Transformers are not just used for language! The same idea is used in computer vision to recognise objects in images, in biology to predict protein structures (like Google DeepMind’s AlphaFold), and even in music AI to generate songs. |

Transformers and Attention Mechanisms have reshaped AI. They are the reason machines can now write essays, compose poetry, summarise research papers, and even code. For beginners, the key idea to remember is that attention allows models to focus on what matters most, and Transformers are the architecture that makes it all work efficiently.