What Is Ethics?

Ethics are the rules that help us tell the difference between what is right and what is wrong. These rules guide how we act, treat others, and make decisions that affect the world around us.

The word ethics comes from the Greek word ethos, which means habit, character, or behavior. Just as we have rules at school or at home, ethics are like rules for life. They help people live together in a fair, kind, and respectful way. AI ethics is the application of these principles to Artificial Intelligence systems and their impact on society.

For example:

- Following classroom rules even when no one is watching is ethical.

- Watching age-appropriate videos is ethical.

- Breaking a queue or copying someone’s homework is unethical.

- Downloading pirated movies or books without paying is also unethical.

In the past, these rules mostly applied to people. But now, as technology becomes smarter, we also need to think about ethics for machines, especially for Artificial Intelligence (AI).

Let us read each situation carefully and understand whether the action is ethical or not.

1. Cheating in an exam is not ethical.

2. Helping a classmate understand a topic is ethical.

3. Using AI or the internet to copy full answers for an assignment is not ethical.

4. Reporting a mistake honestly, even if it gets you in trouble, is ethical.

5. Making fun of someone in a group chat is not ethical.

6. Turning in a project you did completely on your own is ethical.

7. Letting your friend copy your homework is not ethical.

8. Using AI to get ideas but writing your own answers is ethical.

What Is AI Ethics?

Artificial Intelligence is a type of computer system that can do things like solve problems, make decisions, recognize faces, translate languages, or even drive a car. But it does not have a heart or a conscience. It cannot “know” what is right or wrong unless we teach it.

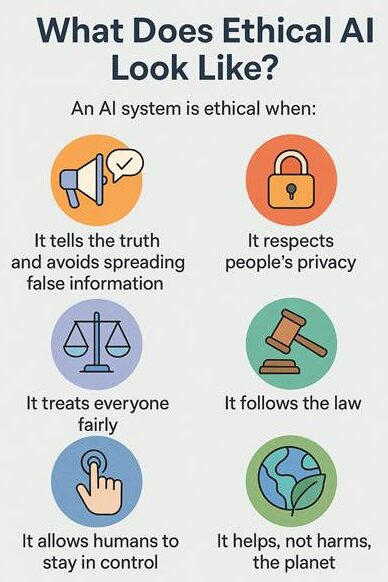

AI ethics is about making sure AI is used in a way that is safe, fair, and helpful. It means putting rules in place so that AI systems stay safe, fair, and helpful for everyone.

Even though AI is built by people, it sometimes works so fast and on such a large scale that it can cause problems if not guided carefully. That is why AI needs a moral compass, just like humans.

Why Do We Need Ethical AI?

AI is not just used in computers or games anymore. It is used in banks, hospitals, classrooms, mobile phones, cars, and even government offices. This means it can affect our health, money, education, and safety.

Since AI systems are created by people, they can have mistakes or biases. For example:

- If an AI is trained with data that is unfair, it might treat some people better than others.

- If an AI is not designed properly, it might make wrong decisions without meaning to.

- Some AI can be used to spread lies (like deepfakes), steal personal information, or cause harm.

So, we need ethical AI to:

- Protect people from harm

- Make fair and equal choices

- Keep personal data safe

- Help the environment

- Build trust between humans and technology

Ethical AI is about making sure that machines help us and do not hurt us.

Key Ethical Challenges in AI

As smart and helpful as Artificial Intelligence can be, it also comes with several challenges. These are situations where AI might cause problems if we are not careful. That is why understanding the ethical challenges in AI is important, so we can avoid harm and use AI responsibly.

Accountability: Who is responsible?

People often think that AI is doing everything by itself. But behind every smart machine, there are human beings, such as programmers, engineers, and scientists, who design and train it. In the same way that professionals in medicine or law must follow ethical guidelines, people working in AI also have a duty to follow ethical codes. This includes thinking about both the positive and negative impacts of AI before releasing it into the real world.

That means humans are still responsible for what AI does. For example: if a self-driving car makes a mistake, who should be blamed? The company that made it? The person who owns it? The engineer who coded it?

This is called the problem of accountability: figuring out who takes responsibility when things go wrong. We need clear rules to make sure that someone is always responsible for how AI is used and what it does.

Did You Know?

Over 50 countries, including Canada and the U.K., along with the European Union, have signed the Council of Europe’s Framework Convention on AI, which aims to protect human rights, democracy, and privacy in AI usage.


People who create AI must also think carefully about the effects of their work. They need to ask questions like:

- Will this harm someone?

- Will this decision be fair?

- Could this invade someone’s privacy?

- How will it affect society in the long run?

Bias in AI: Is it fair to everyone?

AI learns from data. If that data contains unfair or unbalanced information, the AI might learn the same unfairness. This is called bias. There are two main types:

- Human Bias: This comes from real-world unfairness. If people have treated some groups unfairly in the past, and that history is used to train the AI, the AI might repeat the same mistakes.

- Technical Bias: This happens when the way an AI system is designed or programmed leads to unfair results, even if no one meant for it to happen.

For example, an AI that helps hire people might select fewer girls for science jobs just because past data showed fewer girls in that field. That is unfair and must be fixed.
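The hiring example above can be shown with a tiny sketch. Everything in it is made up for illustration: the records in `past_hires`, the numbers, and the helper names `learn_hire_rates` and `naive_ai_decision` are assumptions, not a real hiring system. The sketch only shows how a program that copies past patterns ends up copying past unfairness too.

```python
# Made-up past hiring records for a science job: (group, was_hired).
# These numbers are invented for illustration only.
past_hires = (
    [("boy", True)] * 80 + [("boy", False)] * 20
    + [("girl", True)] * 20 + [("girl", False)] * 80
)

def learn_hire_rates(records):
    """Count how often each group was hired in the past."""
    totals, hired = {}, {}
    for group, was_hired in records:
        totals[group] = totals.get(group, 0) + 1
        if was_hired:
            hired[group] = hired.get(group, 0) + 1
    return {group: hired.get(group, 0) / totals[group] for group in totals}

def naive_ai_decision(group, rates):
    """A naive 'AI' that says yes only if this group was usually hired before."""
    return rates[group] > 0.5

rates = learn_hire_rates(past_hires)
print(rates)                             # hire rate learned for each group
print(naive_ai_decision("boy", rates))   # True
print(naive_ai_decision("girl", rates))  # False
```

The program never looks at anyone's skills. It simply repeats what happened before, which is why unfair data leads to unfair decisions.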

Security and Privacy: Is your data safe?

When you use the internet, whether it is searching for something, watching videos, playing games, or even just doing homework, your actions leave behind something called data. This data includes:

- Your name

- Your photos

- Your voice

- What you click on

- What you search

- Even your homework answers or school progress

Now imagine an AI system that helps you with your homework, recommends videos, or gives you a fun game to play. In order to work properly, it may collect some of this data to understand what you like and how you think. This is normal, but only if it is done safely and with your permission.

But what if someone takes your data without asking?

What if it is stored somewhere carelessly, and someone steals it?

Or worse, what if an AI uses that data to watch you, sell your private information, or show you things you should not be seeing?

That is why security and privacy are such big challenges in AI. An ethical AI system should:

- Ask for permission before using your data.

- Protect that data like a locked treasure chest.

- Never use your information to trick, scare, or harm you.

- Be honest about what it is collecting and why.
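As a rough sketch, the first and last points above (asking for permission and being honest about what is collected) might look like this in a program. The user record, the field names, and the function `collect_with_permission` are all hypothetical and exist only for this example. Real apps handle permission in far more detailed ways.

```python
# A made-up user record. "allowed" lists what the user has agreed to share.
user = {
    "name": "Asha",
    "data": {
        "voice": "recording.wav",
        "search_history": ["volcano facts", "fractions"],
        "homework_progress": "Chapter 3 done",
    },
    "allowed": {"homework_progress"},
}

def collect_with_permission(user, wanted):
    """Collect only the fields the user agreed to share; report the rest as refused."""
    collected = {}
    refused = []
    for field in wanted:
        if field in user["allowed"]:
            collected[field] = user["data"][field]
        else:
            refused.append(field)
    return collected, refused

collected, refused = collect_with_permission(user, ["homework_progress", "voice"])
print("Collected:", collected)
print("Not collected (no permission):", refused)
```

The program takes only what the user agreed to share and openly reports what it did not take.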

Did You Know?

In India, we now have a law called the Digital Personal Data Protection Act, 2023. This law helps protect your personal data, even from AI. It tells companies and app makers that they must take care of your information, get your permission, and never use it the wrong way.

Deepfakes and Misinformation: Can you trust what you see?

Deepfakes are videos, images, or audio clips created by AI to look and sound real, even though they are completely fake. For example, someone could use AI to create a video of a famous person saying something they never actually said. These deepfakes can be used to spread false news, trick people, or damage someone’s reputation.

When people cannot tell what is real and what is not, it becomes easier for lies to spread. This is called misinformation, and it can lead to confusion, fear, or even harm. While AI can be used for creativity and entertainment, using it to create or share fake content is unethical. It is important to use AI in ways that are honest and respectful, and to always think carefully before believing or sharing information online.

AI Alignment: Does AI follow human values?

Sometimes, even if AI is not harmful on purpose, it may still do things that go against what people actually want. This happens when AI is not properly aligned with human values. For example, if an AI is told to “make the fastest delivery” and it starts breaking traffic rules to do that, it is not behaving safely or sensibly.
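The delivery example can be sketched in a few lines of code. The routes, times, and function names below are invented for illustration. The sketch compares a goal that only says "be fast" with a goal that also includes a rule people care about.

```python
# Made-up delivery routes; the numbers are for illustration only.
routes = [
    {"name": "main road", "minutes": 30, "breaks_traffic_rules": False},
    {"name": "wrong-way shortcut", "minutes": 12, "breaks_traffic_rules": True},
    {"name": "side streets", "minutes": 22, "breaks_traffic_rules": False},
]

def fastest_route(routes):
    """The goal exactly as stated: just be as fast as possible."""
    return min(routes, key=lambda r: r["minutes"])

def fastest_safe_route(routes):
    """The goal with a human value added: be fast, but never break traffic rules."""
    safe = [r for r in routes if not r["breaks_traffic_rules"]]
    return min(safe, key=lambda r: r["minutes"])

print(fastest_route(routes)["name"])       # wrong-way shortcut
print(fastest_safe_route(routes)["name"])  # side streets
```

The first function does exactly what it was told and picks the rule-breaking shortcut. The second one is still fast, but it respects the rule that people expect it to follow.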

AI must be trained to work with humans, not against them. It should support our goals, respect our values, and understand what really matters.

Artificial Intelligence is one of the most powerful tools created by humans. It can learn, decide, and even create, just like us in many ways. But unlike people, AI does not understand feelings, fairness, or what is right and wrong on its own. That is why it is so important for us to guide it using strong ethical rules.

AI ethics helps us make sure that technology is used to support people and not cause harm. It teaches us to be careful, fair, and responsible when we create or use smart machines. From protecting privacy to avoiding bias, from staying honest to keeping human control, ethical thinking should be part of every AI system we build.

As future users, leaders, and creators of technology, it is up to us to apply AI ethics by asking the right questions, making responsible choices, and ensuring that AI helps build a better and fairer world for everyone in a sustainable and thoughtful way.