Deep learning is one of the main reasons artificial intelligence has become so powerful today. It allows computers to learn directly from data, instead of being told exactly what to do step by step. This approach is what drives many of the technologies we use every day, such as voice assistants, language translation, facial recognition, and even tools that can generate art or text.

At the center of deep learning are deep learning models. These models are made up of layers of artificial neurons that are loosely inspired by how neurons in the human brain work. Each layer learns to recognize certain patterns in data and passes what it learns to the next layer. By stacking many layers together, the system can represent data at different levels of abstraction. For example, a model that looks at pictures might first learn to detect simple shapes like lines and edges, then move on to recognizing complex objects such as faces or animals.

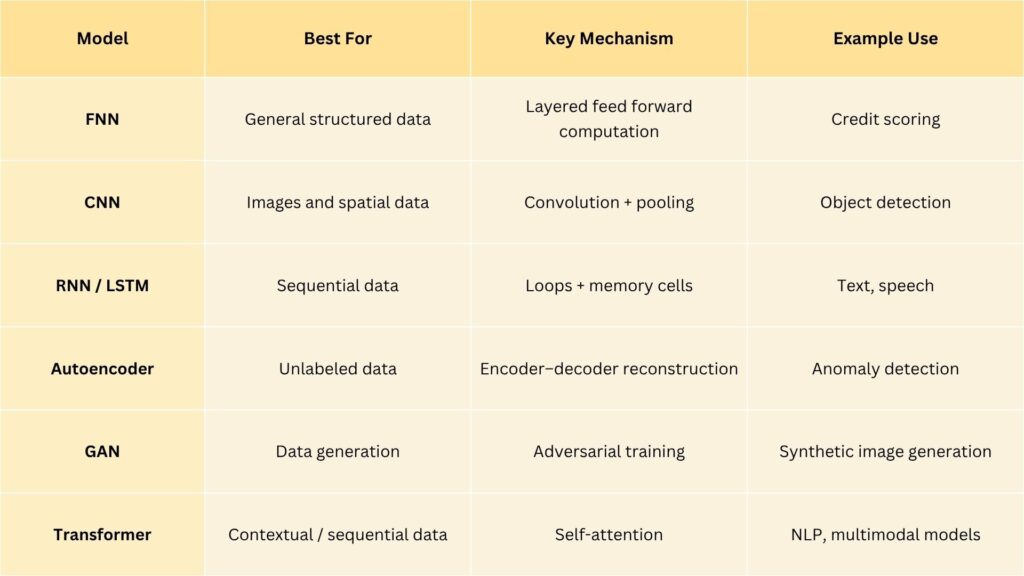

Each type of deep learning model has its own special structure and purpose. Some models are good at analyzing images, others are designed to understand sequences of words or sounds, and some can even generate completely new data that looks real. Together, these models form the foundation of modern artificial intelligence, helping machines see, listen, read, and create in ways that were once thought to be impossible.

Did You Know?

The roots of neural networks go back to 1943. Warren McCulloch and Walter Pitts created one of the first mathematical models of a neuron, long before computers were powerful enough to run networks built from such models.

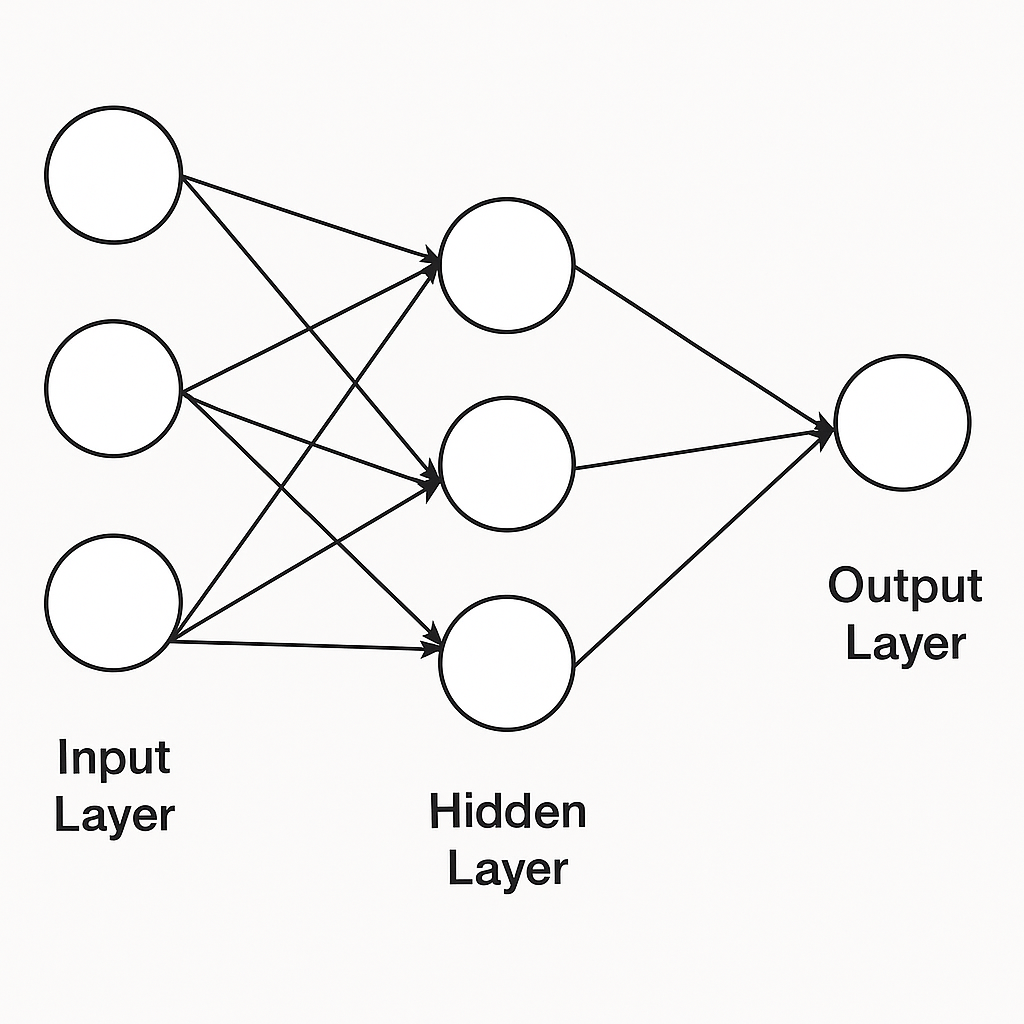

1. Feedforward Neural Networks (FNNs): The Foundation of Deep Learning

Feedforward Neural Networks are the simplest and most fundamental type of neural network. Data flows in one direction from input to output without looping back.

Each neuron multiplies its inputs by weights, adds a bias, applies an activation function (like ReLU or Sigmoid), and passes the result forward. The network learns by adjusting its weights and biases with gradient descent, using a process called backpropagation to compute how much each one contributed to the error, so that the difference between predicted and actual outputs shrinks.
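To make the neuron arithmetic concrete, here is a minimal sketch in plain Python. The layer sizes, the parameter values, and the helper names (`dense_forward`, `forward`, `gradient_step`) are assumptions chosen for illustration, and the update rule shows a single sigmoid neuron with a squared-error loss rather than full backpropagation through several layers.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense_forward(inputs, weights, biases, activation):
    """One layer: every neuron computes activation(weights . inputs + bias)."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z))
    return outputs

# Hypothetical, hand-picked parameters:
# 2 inputs -> 2 hidden ReLU neurons -> 1 sigmoid output.
HIDDEN_W = [[0.5, -0.2], [0.3, 0.8]]
HIDDEN_B = [0.1, -0.1]
OUT_W = [[1.0, -1.0]]
OUT_B = [0.0]

def forward(x):
    """Data flows strictly input -> hidden -> output, with no loops."""
    hidden = dense_forward(x, HIDDEN_W, HIDDEN_B, relu)
    return dense_forward(hidden, OUT_W, OUT_B, sigmoid)[0]

def gradient_step(w, b, x, target, lr=0.5):
    """One gradient-descent update for a single sigmoid neuron (squared error)."""
    y = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
    # Chain rule: d(loss)/dz = 2 * (y - target) * y * (1 - y)
    dz = 2.0 * (y - target) * y * (1.0 - y)
    new_w = [wi - lr * dz * xi for wi, xi in zip(w, x)]
    new_b = b - lr * dz
    return new_w, new_b

prediction = forward([1.0, 2.0])
updated_w, updated_b = gradient_step([0.0, 0.0], 0.0, [1.0, 1.0], target=1.0)
print(prediction, updated_w, updated_b)
```

Calling `gradient_step` repeatedly nudges the neuron's output toward the target, which is the same idea a full network applies to every weight at once.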

Feedforward Neural Networks introduced the basic concept of layered learning, which underpins the other deep architectures described below.

Use cases:

- Basic classification and regression

- Risk prediction or sentiment scoring

- As a building block in more complex models

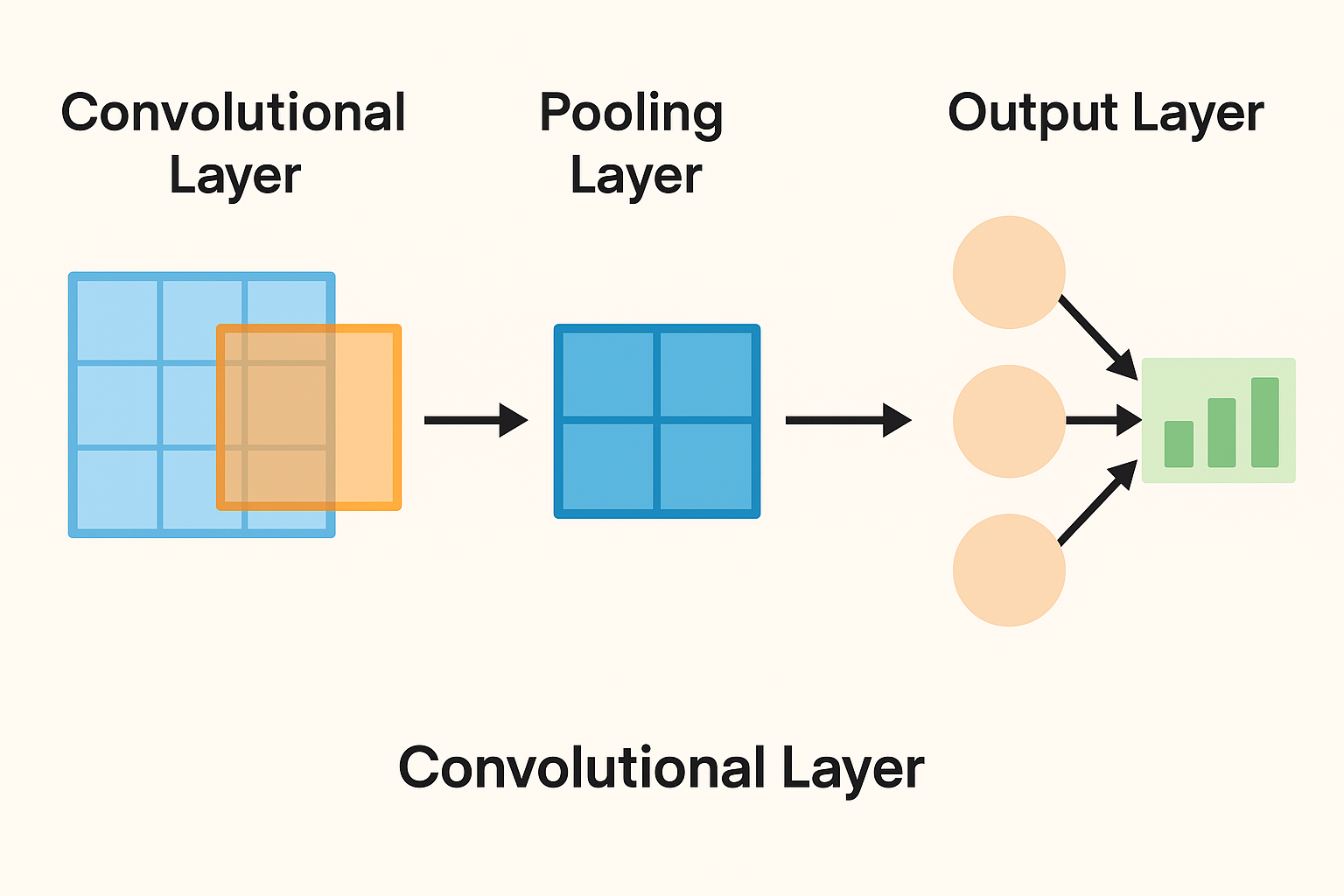

2. Convolutional Neural Networks (CNNs): Giving Machines Vision

Convolutional Neural Networks specialize in visual data: they can detect patterns, edges, and shapes in images. They use convolutional layers that slide small filters (kernels) over an image to extract spatial features.

Each layer captures different levels of abstraction:

- Early layers learn edges and textures,

- Middle layers detect shapes and patterns,

- Deeper layers identify complete objects.

Pooling layers shrink the spatial size of the feature maps while keeping the strongest responses, which reduces computation and makes the model more efficient and scalable.
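The sliding-filter and pooling steps can be sketched in a few lines of plain Python. The 5x5 image and the hand-picked vertical-edge kernel below are assumptions for illustration; in a real CNN the kernel values are learned, and frameworks use much faster implementations.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Like most deep learning libraries, this computes a cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; rows or columns that do not fit are dropped."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[i * size + di][j * size + dj]
                for di in range(size) for dj in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Illustrative image: dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1],
          [-1, 1]]

features = conv2d(image, kernel)   # 4x4 feature map
pooled = max_pool2d(features)      # 2x2 after pooling
print(features)
print(pooled)
```

The feature map is nonzero only where the brightness changes, and pooling keeps that response while halving each spatial dimension.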

CNNs enable machines to interpret images by building up from low-level features to whole objects, forming the foundation of computer vision.

Use cases:

- Object detection (like detecting pedestrians in autonomous cars)

- Medical image analysis

- Facial recognition

- Video classification

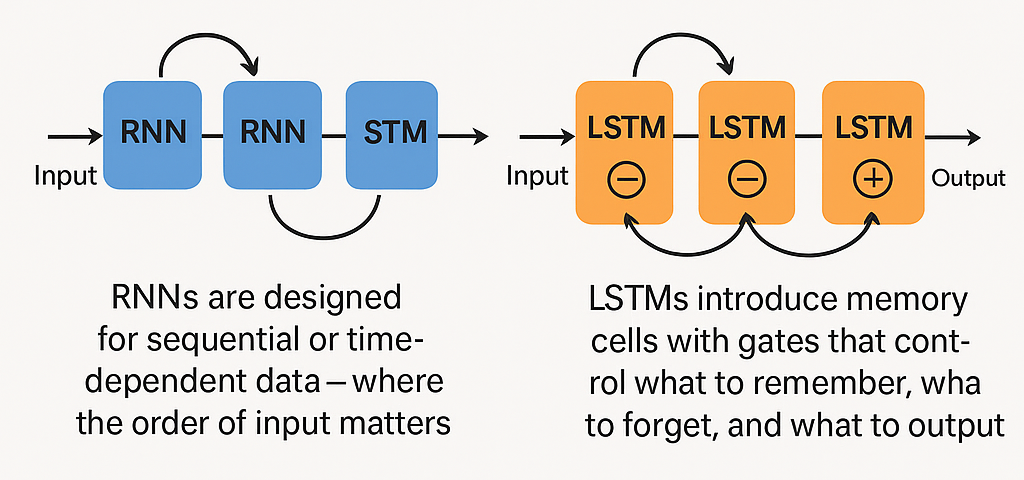

3. Recurrent Neural Networks (RNNs) and LSTMs: Learning from Sequences

Recurrent Neural Networks are designed for sequential or time-dependent data, where the order of input matters (like speech, text, or stock prices). Unlike feedforward neural networks, recurrent neural networks have feedback loops that allow information from previous steps to influence the next step, giving the network a short-term memory.
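As a rough sketch of that feedback loop, the snippet below runs a one-unit recurrent network over a sequence. The scalar weights `w_x`, `w_h`, and `b` are made-up values, and the tanh update is the common textbook form, assumed here because the article does not specify one.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """New hidden state depends on the current input AND the previous state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=1.0, w_h=0.5, b=0.0):
    """Feed the sequence one element at a time, carrying the hidden state along."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# The same two values in a different order give a different final state.
print(run_rnn([1.0, 0.0]))
print(run_rnn([0.0, 1.0]))
```

Because the hidden state is carried from step to step, reordering the inputs changes the result, which is exactly what a feedforward network applied to each element separately could not capture.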

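However, standard recurrent neural networks struggle with long-term dependencies because of vanishing gradients: the training signal shrinks as it is propagated back through many time steps, so early inputs end up having little influence on learning. That’s where Long Short-Term Memory (LSTM) networks come in.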

LSTMs introduce memory cells with gates that control what to remember, what to forget, and what to output. This allows them to retain information over long sequences effectively.
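The gating idea can be illustrated with a scalar LSTM cell. This is a simplified sketch: real LSTMs use weight matrices and vectors, and the parameter values below are hypothetical, chosen so the forget gate stays nearly open and the input gate nearly closed.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM cell.

    p maps each gate name to a (w_x, w_h, bias) tuple.
    """
    def pre(gate):
        w_x, w_h, b = p[gate]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much new information to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # the new information itself
    c = f * c_prev + i * g            # updated memory cell
    h = o * math.tanh(c)              # updated hidden state
    return h, c

# Hypothetical parameters: forget gate ~1 (keep), input gate ~0 (do not overwrite).
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 1.0, 0.0),
}

h, c = 0.0, 1.0   # start with a value of 1.0 stored in the memory cell
for _ in range(50):
    h, c = lstm_step(0.0, h, c, params)
print(c)   # still close to the stored value after 50 steps
```

With these gate settings the cell state barely changes over 50 steps, which is the kind of long-range retention a plain recurrent unit has trouble achieving.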

RNNs and LSTMs enable AI to understand context and continuity, which is critical for language, music, and time-series prediction.

Use cases:

- Speech recognition

- Text generation and translation

- Predicting time-based trends (like weather or sales)

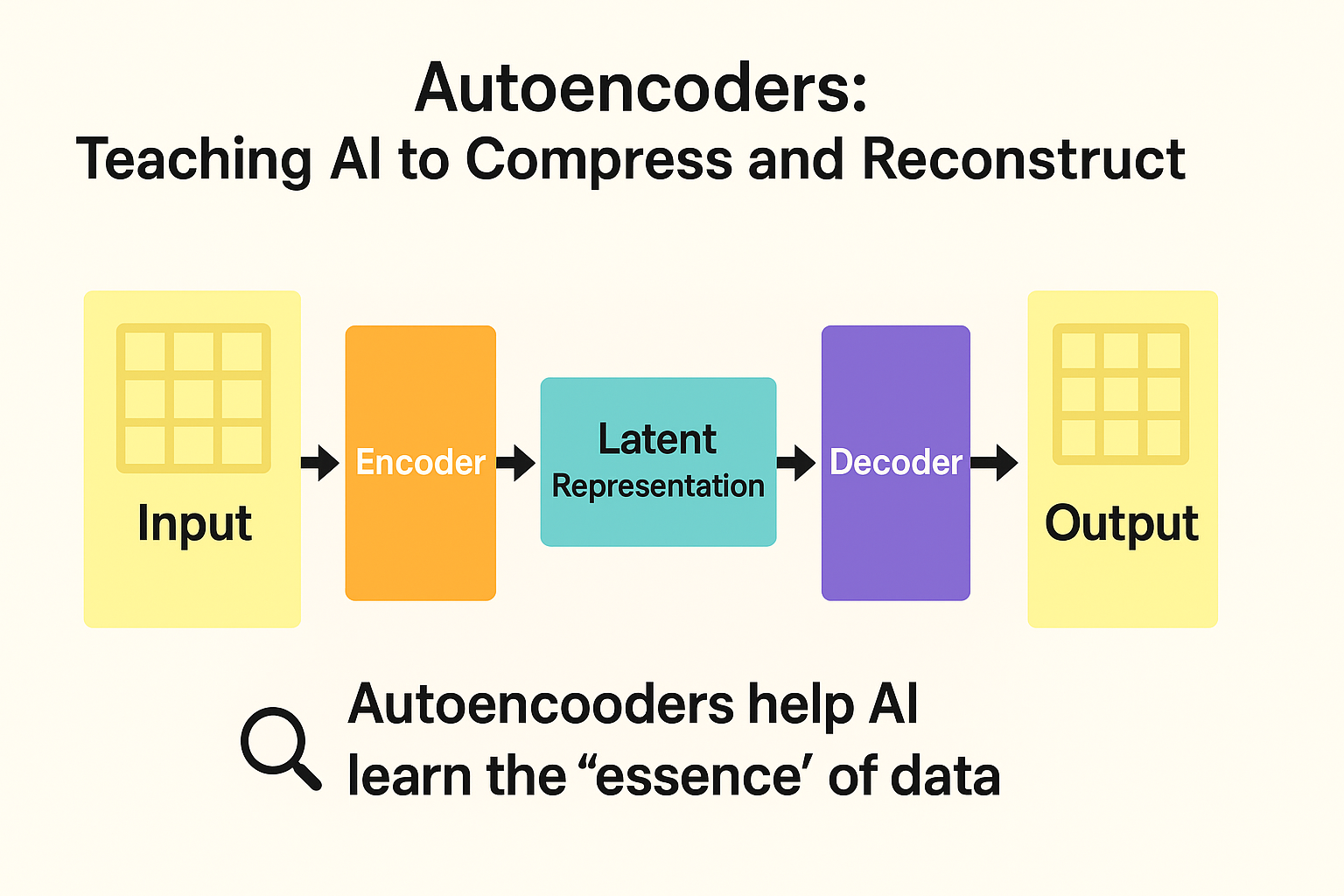

4. Autoencoders: Teaching AI to Compress and Reconstruct

Autoencoders are unsupervised learning models that learn to represent data efficiently. They consist of two main parts:

- Encoder: Compresses the input into a compact latent representation.

- Decoder: Reconstructs the original data from this compressed form.

By minimizing the difference between input and output, autoencoders learn the key features of data without needing labeled examples.
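Here is a toy illustration of that training objective, assuming a purely linear autoencoder that squeezes 2-D points into a single latent number. The data, the starting weights, the learning rate, and the function names are all assumptions made for this sketch; practical autoencoders use nonlinear layers and a deep learning framework.

```python
def encode(x, w_enc):
    """Encoder: compress a 2-D point into one latent number."""
    return sum(w * xi for w, xi in zip(w_enc, x))

def decode(z, w_dec):
    """Decoder: expand the latent number back into a 2-D point."""
    return [w * z for w in w_dec]

def reconstruction_loss(data, w_enc, w_dec):
    """Mean squared difference between each input and its reconstruction."""
    total = 0.0
    for x in data:
        x_hat = decode(encode(x, w_enc), w_dec)
        total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
    return total / len(data)

def train(data, w_enc, w_dec, epochs=300, lr=0.01):
    """Plain stochastic gradient descent on the reconstruction error."""
    w_enc, w_dec = list(w_enc), list(w_dec)
    for _ in range(epochs):
        for x in data:
            z = encode(x, w_enc)
            err = [a - b for a, b in zip(decode(z, w_dec), x)]
            grad_z = sum(2.0 * e * w for e, w in zip(err, w_dec))
            grad_dec = [2.0 * e * z for e in err]
            grad_enc = [grad_z * xi for xi in x]
            w_dec = [w - lr * g for w, g in zip(w_dec, grad_dec)]
            w_enc = [w - lr * g for w, g in zip(w_enc, grad_enc)]
    return w_enc, w_dec

# Unlabeled toy data: every point lies on the line y = 2x,
# so one latent number is enough to describe it.
data = [[s, 2.0 * s] for s in (-1.0, -0.5, 0.5, 1.0)]

start_enc, start_dec = [0.1, 0.1], [0.1, 0.1]
initial_loss = reconstruction_loss(data, start_enc, start_dec)
w_enc, w_dec = train(data, start_enc, start_dec)
final_loss = reconstruction_loss(data, w_enc, w_dec)
print(initial_loss, final_loss)
```

No labels are involved anywhere: the input itself is the training target, and the reconstruction error drops as the encoder and decoder discover the one direction along which the data actually varies.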

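They help machines learn which information is essential, which is a powerful step toward efficient learning. It also supports anomaly detection, because inputs that the model reconstructs poorly are likely to differ from the data it was trained on.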

Use cases:

- Image compression

- Noise reduction (denoising autoencoders)

- Anomaly detection in finance or cybersecurity

- Dimensionality reduction for visualization

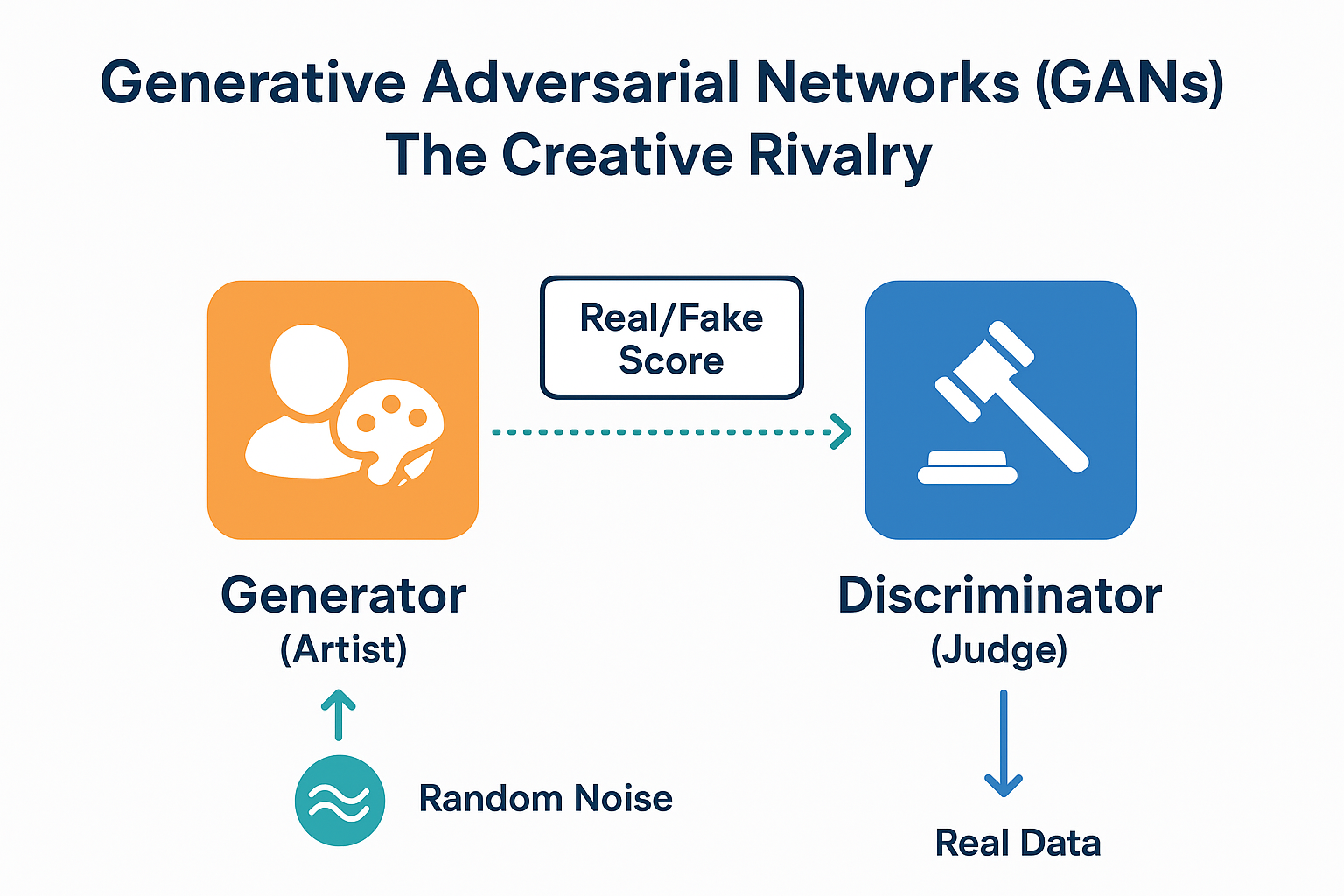

5. Generative Adversarial Networks (GANs): The Creative Rivalry

Generative Adversarial Networks consist of two neural networks that compete against each other:

- Generator: Tries to create fake data that looks real.

- Discriminator: Tries to distinguish real data from fake.

Both networks improve through this adversarial process. Over time, the generator learns to produce outputs so realistic that even the discriminator struggles to tell them apart from real data.
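The rivalry can be expressed as two opposing loss functions. The sketch below assumes the standard binary cross-entropy formulation with the commonly used non-saturating generator loss; the article does not name a specific loss, and the probabilities passed in are invented values standing in for discriminator outputs.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when the discriminator rates real data near 1 and fake data near 0.

    d_real: discriminator's probability that a real sample is real.
    d_fake: discriminator's probability that a generated sample is real.
    """
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator is fooled into rating fake data near 1."""
    return -math.log(d_fake)

# Early in training (hypothetical numbers): fakes are easy to spot.
print(discriminator_loss(0.9, 0.1), generator_loss(0.1))

# Later: the discriminator can only guess, so it outputs 0.5 for everything.
print(discriminator_loss(0.5, 0.5), generator_loss(0.5))
```

Each network is trained to lower its own loss, and a gain for one is a setback for the other: as the generator's loss falls, the discriminator's loss rises toward the "pure guessing" level.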

Generative Adversarial Networks popularized the idea of creative AI, where machines can imagine and generate new content.

Use cases:

- Creating realistic images, videos, or music

- Generating synthetic data for training other AI models

- Deepfake and art generation

6. Transformers: Understanding Context at Scale

Transformers are the powerhouse models behind language models like GPT and BERT. Unlike recurrent neural networks, which process data step by step, transformers use self-attention, a mechanism that lets the model weigh every part of the input against every other part simultaneously.
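A stripped-down sketch of self-attention is shown below. It assumes the scaled dot-product form and, to stay short, uses the token vectors directly as queries, keys, and values; real transformers first pass them through learned projection matrices and use several attention heads. The toy token vectors are made up.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention.

    Simplifying assumption: queries, keys, and values are the token vectors
    themselves (no learned projections).
    """
    d = len(tokens[0])
    outputs, all_weights = [], []
    for query in tokens:
        # Compare this token with every token in the sequence at once.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # The output is a weighted mix of all token vectors.
        mixed = [sum(w * value[j] for w, value in zip(weights, tokens))
                 for j in range(d)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy sequence: the first and last tokens are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
print(weights[0])
```

In the printed weights, the first token attends to the last token exactly as strongly as to itself, even though they sit at opposite ends of the sequence: attention depends on content, not distance.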

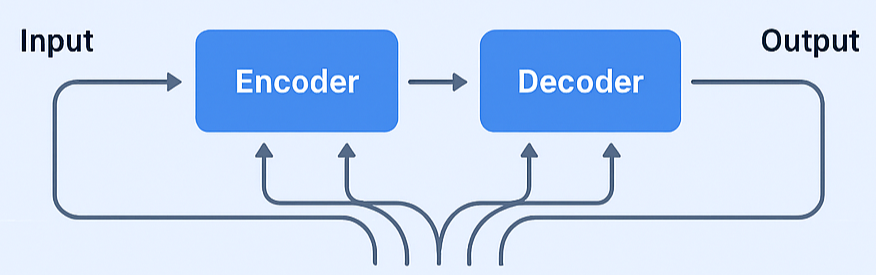

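This means a transformer can relate any two words (or elements) in a sequence directly, regardless of how far apart they are. The original transformer uses an encoder-decoder architecture, while later models often keep only one half: BERT is encoder-only and GPT is decoder-only. Because self-attention by itself ignores order, transformers rely on positional encoding to preserve the order of the data.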
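One way to build a positional encoding is the sinusoidal scheme from the original transformer design, sketched below. This is only one option (many models learn their position embeddings instead), and the function name and the small sizes used here are assumptions for illustration.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal position vectors.

    Even dimensions use sine, odd dimensions use cosine, and each pair of
    dimensions oscillates at a different frequency.
    """
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

pe = positional_encoding(seq_len=4, d_model=4)
for pos, row in enumerate(pe):
    print(pos, [round(v, 3) for v in row])
```

Each position gets a distinct vector, which is added to the corresponding token embedding so that the otherwise order-blind attention mechanism can tell the first word from the fourth.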

Transformers revolutionized how machines model language, context, and relationships, enabling AI to generate text, code, and even images.

Use cases:

- Machine translation and summarization

- Chatbots and conversational AI

- Image and video understanding (Vision Transformers)