Artificial Intelligence has advanced faster than most of us imagined. With just a few words, we can now generate essays, code, images, or even conversations that feel human. But behind that power lies responsibility. Prompt engineering, the art of guiding AI with precise instructions, doesn’t just shape the quality of outputs; it also determines how fair, accurate, and safe those outputs are.

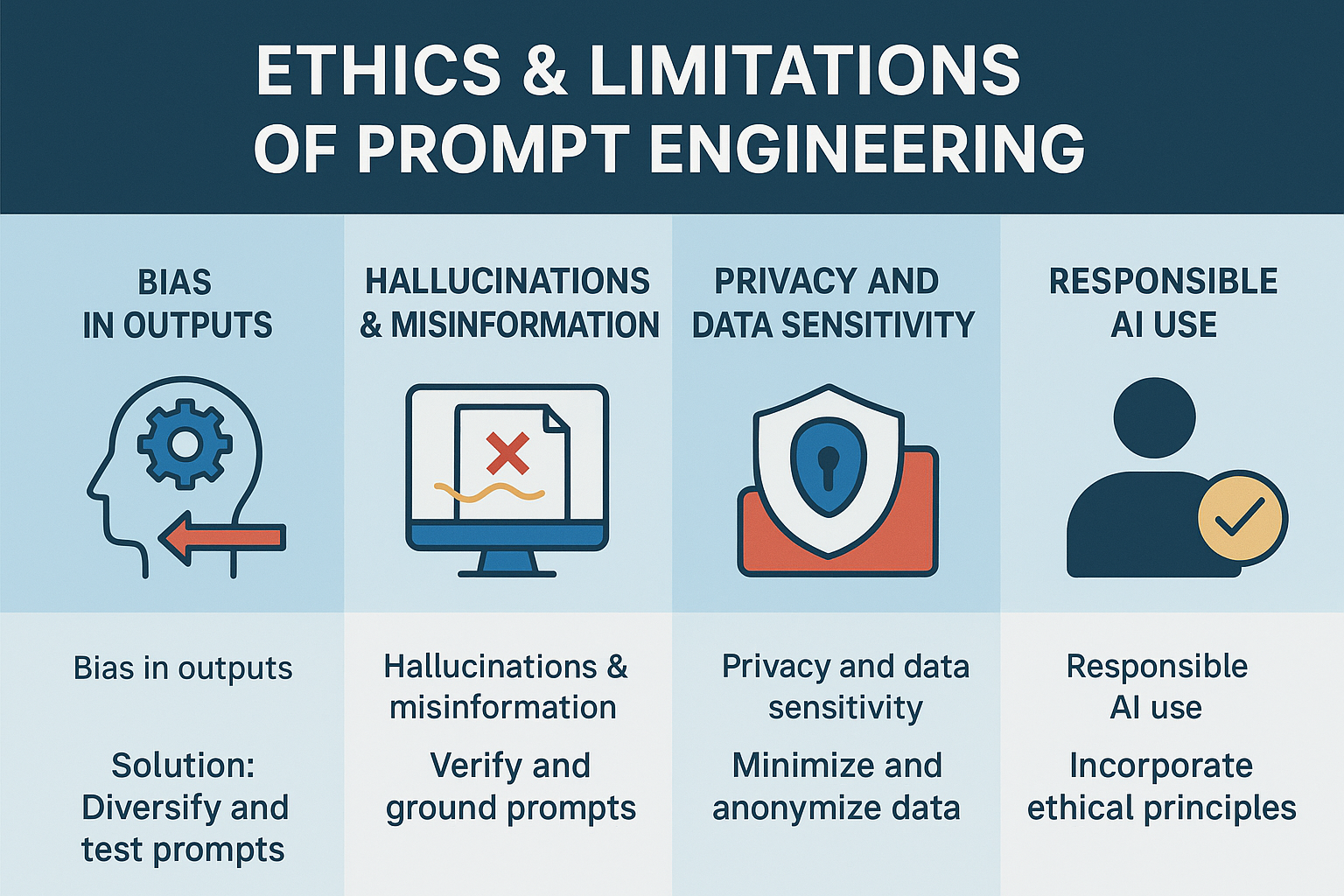

As AI becomes embedded in education, law, healthcare, and media, it’s crucial to understand the ethical boundaries that come with prompting. Four major issues demand attention: bias, hallucinations and misinformation, privacy and data sensitivity, and the broader idea of responsible AI use.

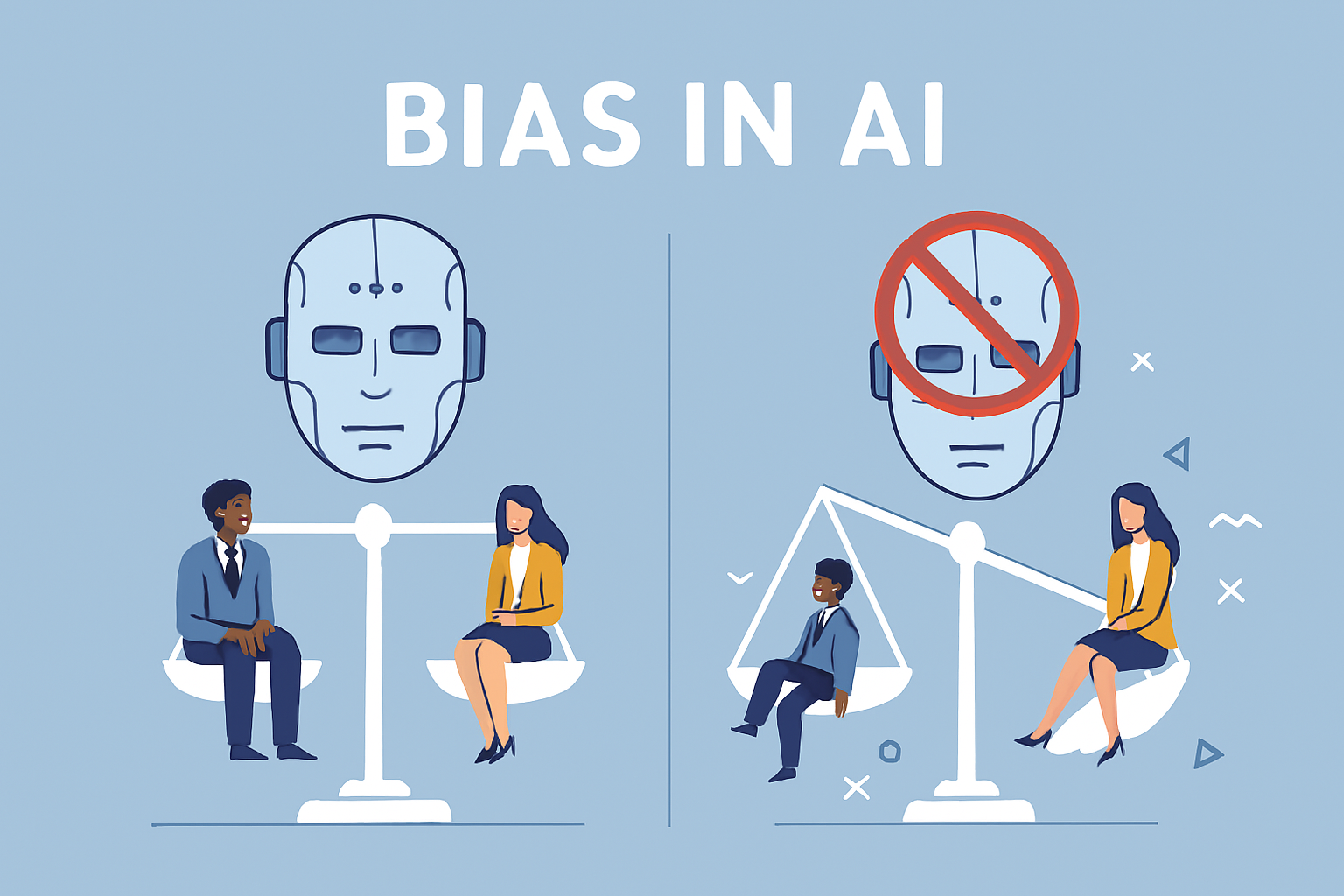

Bias in Outputs

AI models learn from massive amounts of text and data produced by humans. Unfortunately, that data reflects our social biases about gender, caste, religion, or nationality. So even if the model is not “intentionally” biased, its responses can mirror stereotypes or unfair assumptions.

For example, researchers in India recently found that when tested with culturally sensitive prompts, some AI models repeated stereotypes about certain communities. This happened not because the AI was malicious, but because its training data contained biased examples.

A biased prompt can worsen the problem. If you ask a question like, “Why are some groups less intelligent?” the model might try to answer rather than challenge the prejudice built into the question.

How to overcome it:

- Frame prompts neutrally and factually, avoiding language that assumes or implies bias.

- Test responses using multiple variations of the same prompt to check for fairness.

- Add ethical instructions in your prompt, such as “Avoid stereotypes or generalizations.”

- Encourage human review for sensitive outputs.

- Train AI teams to evaluate cultural and regional fairness, especially in diverse countries like India.
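
The variation-testing idea above can be sketched in a few lines of Python. This is only an illustration: the template, the slot values, and the `build_variants` helper are assumptions made for this example, and the model call itself is left out. The sketch fills one neutral template with different roles and regions and appends the ethical instruction, so the responses can be compared side by side for unequal treatment.

```python
from itertools import product

# Ethical instruction quoted from the list above.
ETHICAL_INSTRUCTION = "Avoid stereotypes or generalizations."


def build_variants(template: str, slots: dict[str, list[str]]) -> list[str]:
    """Fill every combination of slot values into the template."""
    names = list(slots)
    variants = []
    for values in product(*(slots[name] for name in names)):
        filled = template.format(**dict(zip(names, values)))
        variants.append(f"{filled} {ETHICAL_INSTRUCTION}")
    return variants


# Hypothetical template and slot values, chosen only for illustration.
variants = build_variants(
    "Describe a typical day for a {role} from {region}.",
    {"role": ["nurse", "engineer"], "region": ["Kerala", "Punjab", "Assam"]},
)

for prompt in variants:
    print(prompt)  # send each variant to the model and compare the answers
```

If the answers differ in tone, competence, or assumptions when only the role or region changes, that difference is a signal worth sending to human review.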

Bias may never be eliminated entirely, but thoughtful prompting can reduce its harm. As India’s AI ecosystem grows, fairness and inclusivity must be built into how we design and test our prompts.

Hallucinations & Misinformation

One of the biggest limitations of AI systems is their tendency to “hallucinate”: to produce confident but false information. A model might cite a law that doesn’t exist, invent a medical fact, or fabricate a source.

These mistakes can be serious. Recently, lawyers in several countries faced legal trouble after submitting AI-generated case citations that turned out to be fictional. In India, even courts have discussed the risks of using AI for legal research without verification.

The danger isn’t just that AI makes errors; it is that it sounds convincing when it does. If prompt engineers don’t include fact-checking steps or clear instructions, the AI’s fabricated details can quickly spread as misinformation.

Fun Fact

Some AI-generated responses invent completely fictional yet convincing details, like a fake Shakespeare poem or a mythical court case. While dangerous if used as fact, this same quirk has been used for creative storytelling, brainstorming, and even generating unique game plots.

How to overcome it:

- Always ask the model to clarify uncertainty: “If unsure, say so instead of guessing.”

- Verify any factual or legal statements manually before use.

- Use retrieval-based models or add real references to ground the output.

- Avoid multi-step prompts that build on an earlier response you have not verified, since each step can compound the original error.

- Treat AI as an assistant, not an authority: it can suggest ideas, but humans must validate them.
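
As a rough sketch of the first and third points, the helper below wraps a question with numbered references and the uncertainty instruction. The function name `build_grounded_prompt`, the prompt wording, and the placeholder references are assumptions for illustration; in practice the references would be excerpts a human has already verified.

```python
def build_grounded_prompt(question: str, references: list[str]) -> str:
    """Wrap a question with numbered references and an uncertainty instruction."""
    numbered = "\n".join(
        f"[{i}] {ref}" for i, ref in enumerate(references, start=1)
    )
    return (
        "Answer using only the references below and cite them by number.\n"
        "If unsure, say so instead of guessing.\n\n"
        f"References:\n{numbered}\n\n"
        f"Question: {question}"
    )


# Placeholder references: replace with verified source excerpts.
prompt = build_grounded_prompt(
    "What does the policy say about data retention?",
    ["<verified excerpt 1>", "<verified excerpt 2>"],
)
print(prompt)
```

Grounding narrows what the model can draw on, but it does not replace the manual verification step: the cited numbers still need to be checked against the sources.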

Prompt engineering is as much about controlling what not to say as it is about crafting what to say.

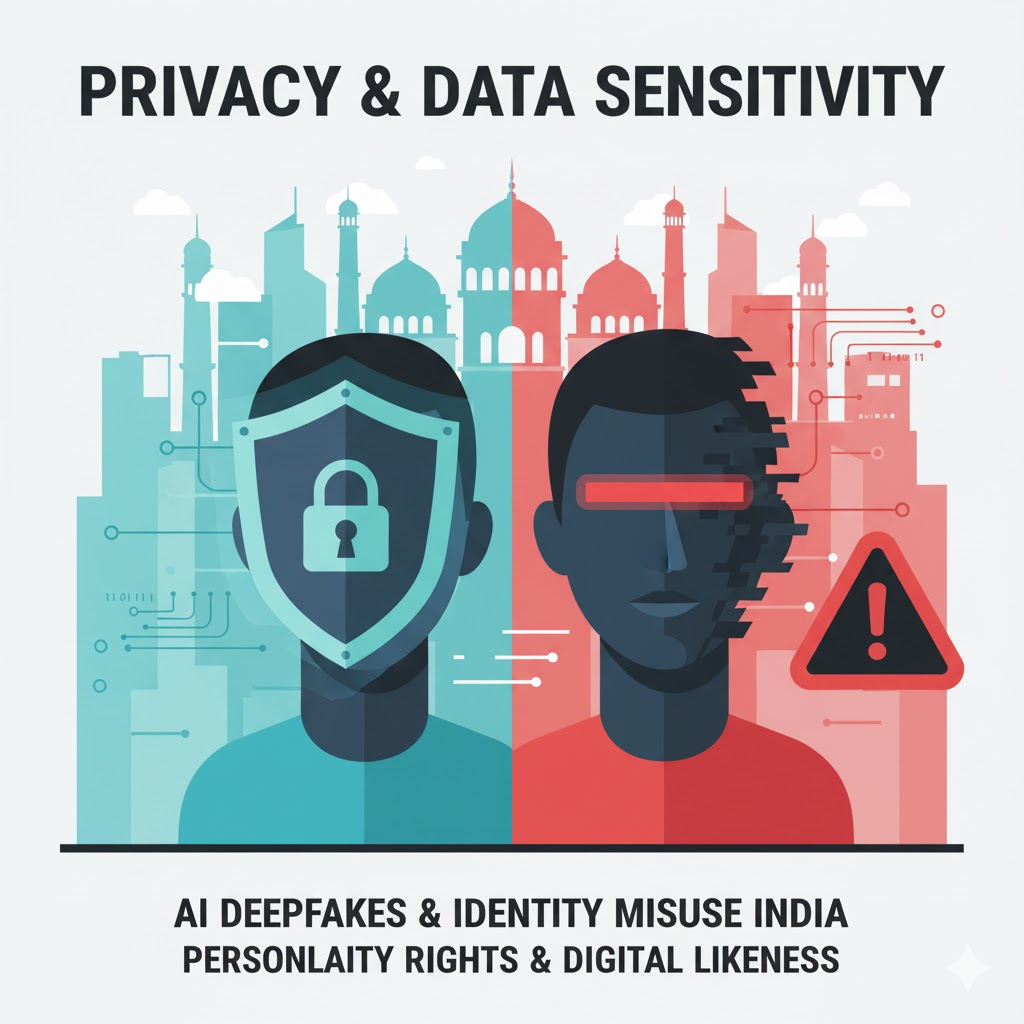

Privacy and Data Sensitivity

Prompts often include personal, confidential, or sensitive details. If those details are stored or reused, privacy can be at risk. This concern has grown sharply with the rise of AI-generated deepfakes and identity misuse in India.

In recent years, there have been multiple incidents of morphed images (deepfakes) of public figures and private individuals circulating online, created entirely by AI. Several Indian celebrities, including film actors, have approached courts to protect their digital likeness and “personality rights.” Religious institutions have also protested the misuse of AI-generated content that altered sacred imagery.

To counter these issues, India enacted the Digital Personal Data Protection (DPDP) Act, 2023, which governs how personal data can be collected, processed, and shared. The Act, along with the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, requires platforms and developers to prevent misuse of personal data, including through AI systems.

How to protect privacy in prompt design:

- Never include real names, addresses, or confidential data in a prompt unless absolutely necessary.

- Anonymize or replace identifying details with placeholders.

- Inform users if their input might be stored or processed.

- Use encryption and secure data handling practices for any logged prompts.

- Regularly delete unused prompt histories or training data.
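
The placeholder idea above can be sketched with two regular expressions. This is a minimal illustration under stated assumptions: the patterns cover only email addresses and ten-digit Indian mobile numbers, and the `redact` helper and sample text are invented for this example. Names, addresses, and ID numbers need a dedicated PII detection tool or manual review.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Ten-digit Indian mobile number with an optional +91 prefix.
    "PHONE": re.compile(r"(?<!\d)(?:\+91[\s-]?)?[6-9]\d{9}(?!\d)"),
}


def redact(text: str) -> str:
    """Replace each matched detail with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


raw = "Contact Asha at asha@example.com or +91 9876543210 about the claim."
print(redact(raw))
# Contact Asha at [EMAIL] or [PHONE] about the claim.
```

Note that the name "Asha" survives redaction, which is exactly why pattern matching alone is not enough for sensitive prompts.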

Privacy is not just a technical requirement; it’s an ethical responsibility. A single careless prompt could expose someone’s identity or personal story to systems beyond your control.

Responsible AI Use

Prompt engineering sits at the intersection of creativity, technology, and ethics. It’s not enough to know how to make an AI produce what you want; you must also think about whether you should. Responsible AI means using these tools with awareness of their social impact and potential misuse.

In India, policymakers have started discussing national AI safety standards under the IndiaAI Mission, and an AI Safety Institute is being established to guide ethical development. These moves signal a growing recognition that AI systems need governance and accountability, just like any other powerful technology.

Responsible prompting practices include:

- Keeping humans in the loop. Important decisions should never rely solely on AI output.

- Being transparent when content is AI-generated.

- Monitoring AI systems continuously for errors, bias, or drift over time.

- Encouraging ethical education among prompt engineers and developers.

- Reporting harmful or misleading AI behavior to the relevant teams or authorities.
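
The first two practices can be expressed as a small gate in code. The sketch below is one possible shape, not a standard: the `Draft` class, the list of sensitive topics, and the disclosure wording are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of topics that always require human sign-off.
SENSITIVE_TOPICS = {"legal", "medical", "financial"}


@dataclass
class Draft:
    text: str
    topic: str
    approved_by: Optional[str] = None  # name of the human reviewer, if any


def publishable(draft: Draft) -> bool:
    """Sensitive drafts need a named human reviewer before release."""
    return draft.topic not in SENSITIVE_TOPICS or draft.approved_by is not None


def label(draft: Draft) -> str:
    """Append a disclosure so readers know the text is AI-generated."""
    return f"{draft.text}\n\n[AI-generated content]"


draft = Draft(text="Summary of the tenancy dispute.", topic="legal")
print(publishable(draft))  # False: no reviewer has signed off yet
draft.approved_by = "reviewer"
print(publishable(draft))  # True
print(label(draft))
```

The gate is deliberately simple: it makes the human approval step explicit and records who gave it.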

Responsible AI isn’t a checklist; it is a mindset. Each prompt engineer plays a part in shaping how AI interacts with society fairly, honestly, and safely.

AI in News

In October 2025, Bollywood actor Hrithik Roshan successfully secured a court order from the Delhi High Court to remove unauthorized AI-generated content that misused his image and persona. This ruling highlights the growing importance of protecting digital identities against misuse in the age of AI.

Prompt engineering gives humans a new kind of influence: the ability to shape how machines think, reason, and communicate. But that power comes with moral weight. Bias, misinformation, and privacy violations are not technical bugs; they are ethical blind spots that must be addressed through conscious design and responsible use.

India’s efforts, such as the DPDP Act, the IT Rules, and the upcoming AI governance frameworks, are early steps toward balancing innovation with protection. As AI continues to evolve, prompt engineers must combine creativity with conscience. The goal isn’t just smarter AI; it’s ethical AI.