Artificial Intelligence (AI) has become part of our daily lives, answering questions, writing content, generating images, and even helping in medical research. But sometimes, AI makes things up. It can confidently give you an answer that looks correct but is completely false. This phenomenon is called AI hallucination.

Think of it like this: if a human imagines or invents a story and presents it as fact, they’re “hallucinating” information. AI does something similar when it outputs wrong, nonsensical, or fabricated results while sounding convincing.

What Are AI Hallucinations?

An AI hallucination happens when an AI system produces incorrect, misleading, or made-up information that doesn’t match reality.

- In text-based AI (like ChatGPT), this might be a false answer, a fabricated quote, or even a non-existent source.

Examples:

- Asking an AI: “Who won the FIFA World Cup in 2022?”

Correct answer: Argentina

Hallucination: “Brazil won the 2022 World Cup” (completely false).

- Asking for a scientific explanation:

The AI might invent a chemical formula that doesn’t exist.

- Requesting references or citations:

The AI may generate fake research papers, authors, or links.

Did you know?

Meta’s AI chatbot at times claimed that a real event (the Trump rally shooting) didn’t happen, even though the event was well documented. This was a hallucination.

- In image AI (like DALL·E or Stable Diffusion), it could be extra fingers on a hand, a distorted face, or unrealistic objects.

Examples:

- A person with six fingers or extra arms in generated images.

- A cat with two heads when you asked for “a cute cat photo.”

- A car with impossible wheels or a clock with 13 hours.

- In speech or translation AI, it could mean mishearing or mistranslating words, or inventing content that was never said.

Examples:

- A speech-to-text system mishearing “recognize speech” as “wreck a nice beach.”

- Translation AI inventing phrases not present in the original text.

The AI isn’t “lying” on purpose; it just predicts something that sounds or looks right but is actually wrong.

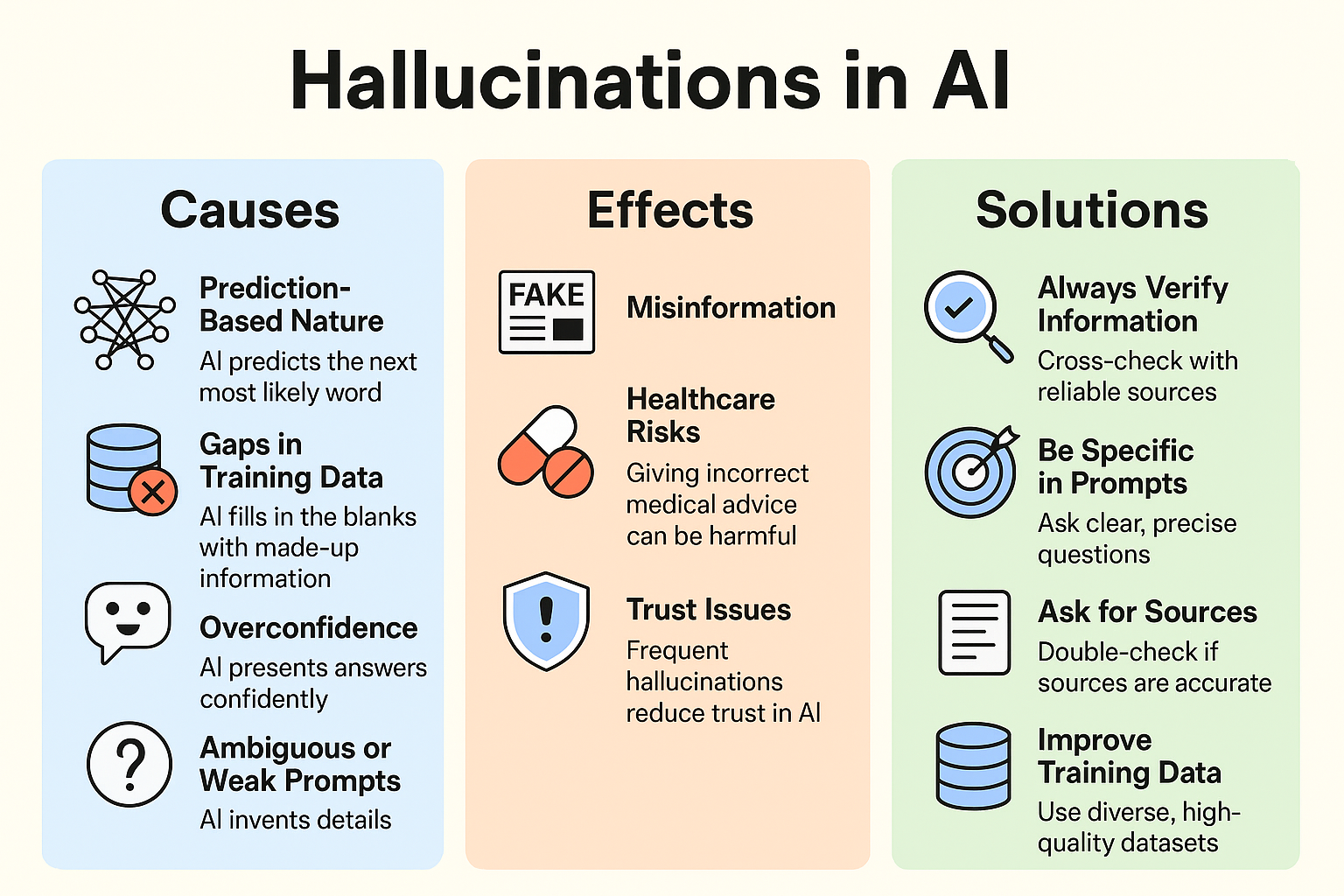

Why Do AI Hallucinations Happen?

Hallucinations are not random mistakes. They happen because of how AI is built and trained:

- Prediction-Based Nature

AI language models (like ChatGPT) don’t “know” facts. They predict the next most likely word based on their training data. The result may sound fluent, but fluency doesn’t guarantee truth.

- Gaps in Training Data

If the AI hasn’t seen enough examples of something (e.g., recent events), it may “fill in the blanks” with made-up information.

- Overconfidence

AI is designed to be helpful and sound natural, so it tends to present answers confidently even when they are wrong.

- Ambiguous or Weak Prompts

If the user’s input is vague (“Tell me about the latest discovery in space”), the AI may invent details.

- Lack of Real-Time Knowledge

Unless it is connected to the internet or updated frequently, an AI relies only on past data. Example: asking about an event in 2025 when the model was last trained in 2023.

- Complexity in Image Generation

AI image models sometimes fail to capture human anatomy or basic physics correctly, leading to distorted pictures.
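The prediction-based point can be made concrete with a deliberately tiny Python sketch. This is not how ChatGPT works internally. Real models use neural networks over long contexts, not word-pair counts. The sketch assumes a hypothetical three-sentence training corpus and a simple greedy rule: always pick the word that most often followed the previous one. It shows how choosing the statistically likely word can produce a fluent but false sentence.

```python
from collections import Counter, defaultdict

# Hypothetical toy training corpus; a real model learns from billions of sentences.
CORPUS = [
    "brazil won the world cup in 2002",
    "fans still remember the final in 2002",
    "argentina won the world cup in 2022",
]

def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, prompt):
    """Greedily return the most frequent follower of the prompt's last word."""
    last = prompt.split()[-1]
    followers = counts.get(last)  # .get() does not create an empty entry
    if not followers:
        return None  # the model has never seen this word
    return followers.most_common(1)[0][0]

model = train_bigrams(CORPUS)
prompt = "argentina won the world cup in"
print(prompt, predict_next(model, prompt))
```

In the toy corpus, “in 2002” appears twice and “in 2022” only once. So the model completes the prompt as “argentina won the world cup in 2002”. Argentina did not win that year; Brazil did. The sentence is grammatical and stated with full confidence, yet it is wrong, because the model follows word patterns rather than facts.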

How to Reduce or Prevent Hallucinations

While hallucinations can’t be eliminated entirely (yet), there are steps to reduce them:

For Users

- Always Verify Information: Cross-check with reliable sources (books, news sites, research papers).

- Be Specific in Prompts: Instead of asking “Tell me about physics,” ask “Explain Newton’s three laws of motion in simple words.”

- Ask for Sources: Then double-check that those sources exist and are accurate.

For Developers & Researchers

- Improve Training Data: Use diverse, high-quality, fact-checked datasets.

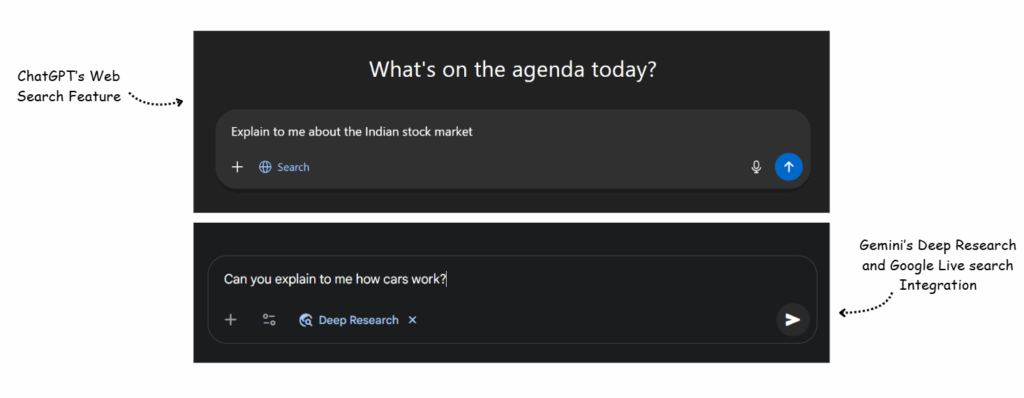

- Add Retrieval Systems: Combine AI with search engines or databases so it fetches real facts instead of guessing (e.g., Google Gemini with browsing tools).

- Uncertainty Indicators: Teach AI to admit when it doesn’t know something instead of making it up.

- Human-in-the-Loop Systems: In critical areas like medicine or law, AI outputs should always be checked by experts.

- Regular Updates: Keeping models updated with new, verified information reduces errors.
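The retrieval and uncertainty ideas above can be combined in a small Python sketch. It makes several assumptions:

- The “verified source” is a hypothetical in-memory list of documents.
- Naive word-overlap scoring stands in for a real search engine or database.
- The `min_score` threshold of 0.5 is an arbitrary illustrative value.

What matters is the control flow. The sketch answers only from retrieved text and says “I don't know” when nothing relevant is found.

```python
import re

# Hypothetical verified documents; a real system would query a search engine
# or database at answer time instead of using a fixed list.
DOCUMENTS = [
    "Argentina won the 2022 FIFA World Cup, beating France in the final.",
    "Newton's first law states that an object stays at rest or in uniform motion unless acted on by a force.",
]

def tokenize(text):
    """Return the set of lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))

def retrieve(question, documents):
    """Return (best_document, score): score is the share of question words found in it."""
    q_words = tokenize(question)
    if not q_words:
        return None, 0.0
    best_doc, best_score = None, 0.0
    for doc in documents:
        score = len(q_words & tokenize(doc)) / len(q_words)
        if score > best_score:
            best_doc, best_score = doc, score
    return best_doc, best_score

def answer(question, documents, min_score=0.5):
    """Answer only from retrieved text; admit uncertainty when nothing matches well."""
    doc, score = retrieve(question, documents)
    if doc is None or score < min_score:
        return "I don't know; I couldn't find a reliable source for that."
    return f"According to my sources: {doc}"

print(answer("Who won the 2022 FIFA World Cup?", DOCUMENTS))
print(answer("Who discovered the latest exoplanet?", DOCUMENTS))
```

The first question matches the World Cup document closely, so the sketch answers from it. The second question matches almost nothing, so the sketch declines instead of inventing a discovery. Real systems, such as chatbots with web search, use far more capable retrieval and ranking. The design trade-off is the same, though: grounding answers in fetched sources and abstaining below a confidence threshold gives up some coverage in exchange for fewer made-up answers.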

Real-World Efforts to Tackle Hallucinations

- Google’s Gemini (formerly Bard) and OpenAI’s ChatGPT are integrating live search features to pull in accurate, up-to-date information.

- Healthcare AI tools often use hybrid models that combine machine learning with verified medical databases.

- Fact-checking systems are being built into AI pipelines to flag doubtful content before showing results.

Fun Fact:

Google’s Knowledge Graph, a large structured database of entities and the facts connecting them, can be used by some AI systems to cross-verify generated answers and reduce hallucinations.
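The cross-verification idea can be sketched in Python with a tiny, hypothetical knowledge graph stored as a dictionary. This is not Google’s Knowledge Graph or its API. The sketch also assumes the AI’s answer has already been turned into a (subject, relation, object) claim, which in practice is a hard step of its own. The check returns one of three verdicts, so a pipeline can flag doubtful content before showing it.

```python
# Hypothetical mini knowledge graph mapping (subject, relation) -> object.
# Real knowledge graphs hold billions of facts; this is only an illustration.
KNOWLEDGE_GRAPH = {
    ("2022 FIFA World Cup", "winner"): "Argentina",
    ("2018 FIFA World Cup", "winner"): "France",
}

def check_claim(subject, relation, claimed_object, graph):
    """Compare a generated claim with the stored fact for the same subject and relation."""
    known = graph.get((subject, relation))
    if known is None:
        return "unverified"  # no stored fact: flag for human review
    if known == claimed_object:
        return "supported"
    return f"contradicted (graph says {known})"

# A hallucinated answer and a correct one, already parsed into triples.
print(check_claim("2022 FIFA World Cup", "winner", "Brazil", KNOWLEDGE_GRAPH))
print(check_claim("2022 FIFA World Cup", "winner", "Argentina", KNOWLEDGE_GRAPH))
print(check_claim("2030 FIFA World Cup", "winner", "Spain", KNOWLEDGE_GRAPH))
```

The three calls show the three outcomes:

- The hallucinated “Brazil” answer is contradicted by the stored fact.
- The correct answer is supported.
- A claim the graph knows nothing about is marked unverified rather than silently accepted.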

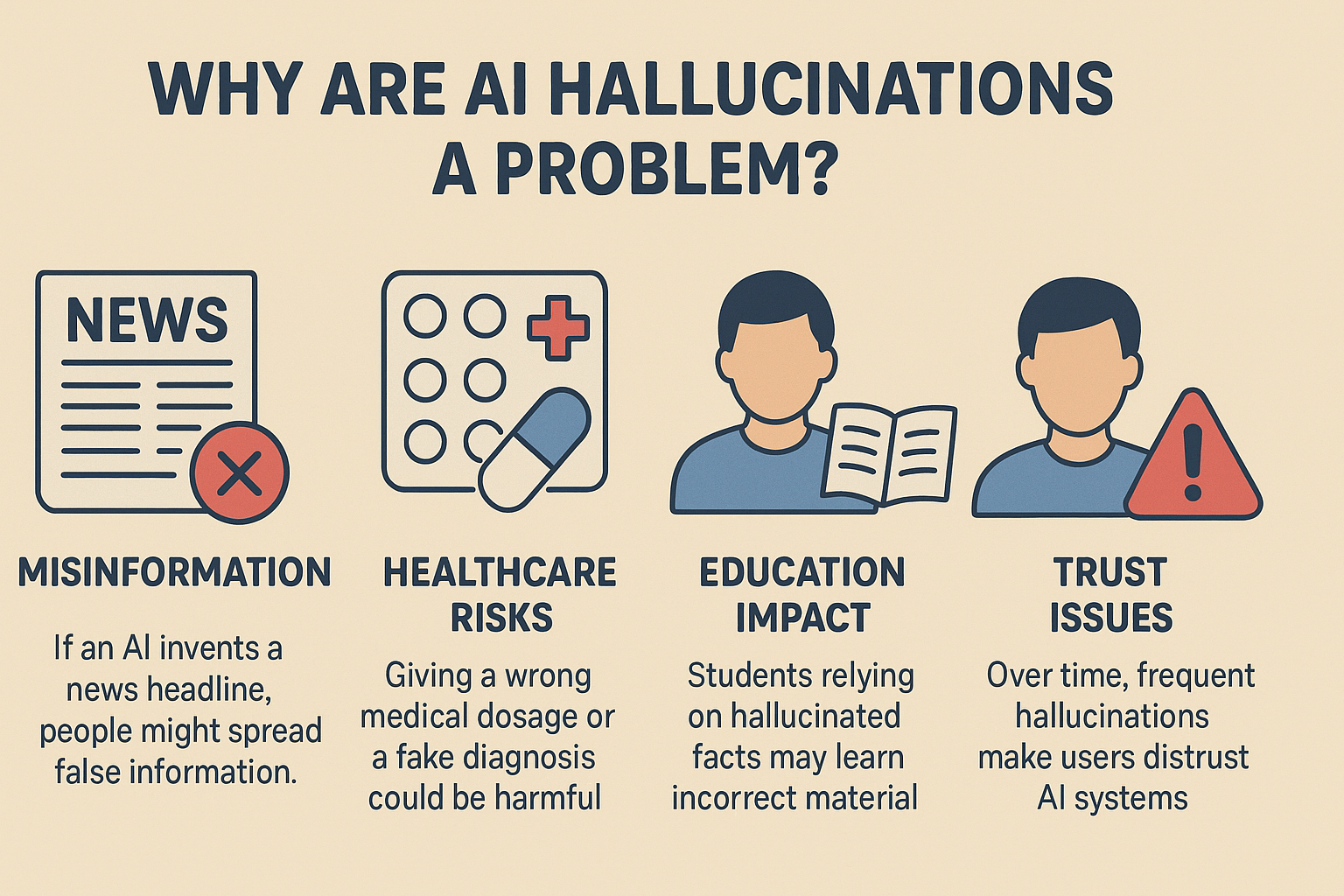

AI hallucinations happen when AI generates results that look correct but are false or nonsensical. They occur because AI predicts patterns rather than truly “understanding” reality. While often harmless in casual chats, they can be dangerous in fields like education, law, and healthcare.

The good news? With careful design, constant updates, fact-checking, and responsible use, hallucinations can be reduced. The key is not to blindly trust AI but to treat it as a powerful assistant that still needs human judgment.