When you unlock your phone with your face, activation functions are quietly at work. Every time your phone recognizes you, the AI inside makes countless tiny decisions: which pixels matter, which features signal “you,” and which signals to ignore.

Deep learning, the technology behind this, is inspired by how the human brain works. At the heart of every artificial neural network lies the idea of neurons passing signals forward. But just as in the brain, neurons in an artificial system need a way to make decisions; they cannot simply pass numbers along without meaning. This is where activation functions come in.

Why Do Neurons Need to Make Decisions?

Imagine looking at a photo of a cat. Not every pixel in the image helps identify the cat. Some pixels might belong to the background, while others might show fur or eyes. A neuron in a neural network needs to decide what is important in the data.

Just like our brain ignores unimportant sounds in a noisy room, artificial neurons use activation functions to filter and process signals and focus on useful patterns.

What Are Activation Functions?

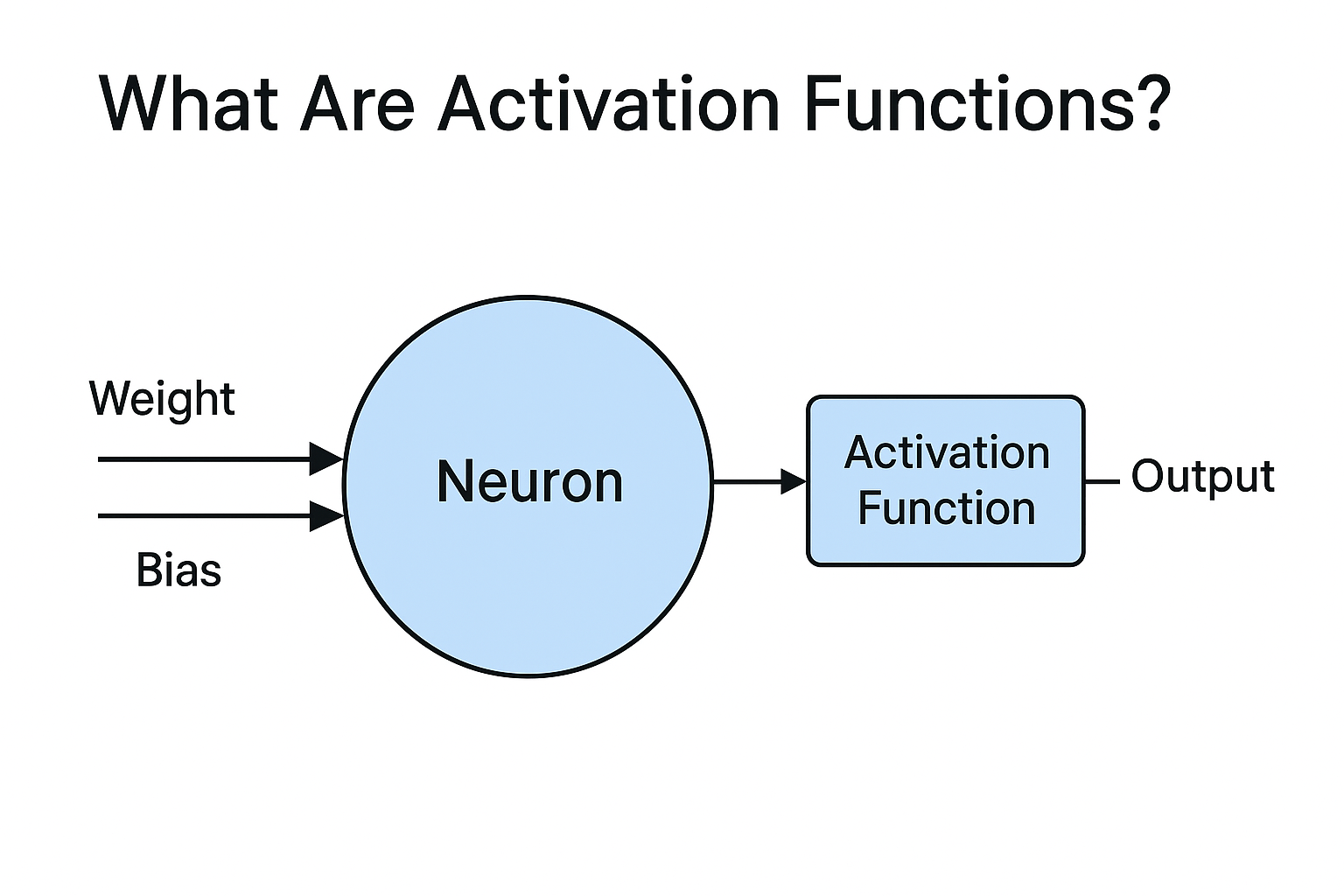

In a neural network, there are multiple layers: the input layer, hidden layers, and the output layer. Data flows from one neuron to another through these layers until a final output is generated. Each neuron processes the incoming data using weights, a bias, and an activation function.

An activation function is a small mathematical operation applied to the combined input a neuron receives, before the neuron's output moves to the next layer. It decides whether a neuron should:

- Fire strongly (pass information forward),

- Dampen the signal, or

- Block it entirely.

Without activation functions, a neural network would behave like a giant calculator that can only add up weighted inputs. No matter how many layers you stack, the result is still one big weighted sum (a linear function), so the network would never be able to detect complex patterns such as recognizing faces in images, identifying voices in audio, or understanding meaning in text.
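Why stacking layers without activation functions adds nothing can be checked directly. The sketch below, in plain Python, builds two layers that only compute weighted sums and then shows that a single merged layer produces exactly the same outputs. The weights, biases, and helper names (`linear_layer`, `two_layers`, `one_layer`) are arbitrary choices made up for illustration.

```python
# Two layers with NO activation function, written with plain Python lists.
# All weights and biases are arbitrary illustrative numbers.

def linear_layer(x, weights, biases):
    """Compute W @ x + b for one layer (weights is a list of rows)."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]

# Layer 1: 2 inputs -> 2 outputs; Layer 2: 2 inputs -> 1 output
W1, b1 = [[1.0, 2.0], [3.0, -1.0]], [0.5, -0.5]
W2, b2 = [[2.0, -1.0]], [1.0]

def two_layers(x):
    """Feed x through layer 1, then layer 2, with nothing in between."""
    return linear_layer(linear_layer(x, W1, b1), W2, b2)

# Merge both layers into ONE: W = W2 @ W1 and b = W2 @ b1 + b2
W_merged = [[sum(W2[0][k] * W1[k][j] for k in range(2)) for j in range(2)]]
b_merged = [sum(W2[0][k] * b1[k] for k in range(2)) + b2[0]]

def one_layer(x):
    """A single weighted sum that reproduces the two-layer network."""
    return linear_layer(x, W_merged, b_merged)

for x in ([1.0, 2.0], [-3.0, 0.5], [0.0, 0.0]):
    print(x, two_layers(x), one_layer(x))  # the last two columns always match
```

Putting a non-linear function such as ReLU between the two layers breaks this merge, which is exactly what lets deeper networks represent more than a single weighted sum.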

How Activation Functions Work

An activation function takes the weighted sum of a neuron’s inputs and transforms it into an output that can be passed to the next layer. Mathematically, this can be represented as:

Output = Activation(Weighted_Sum)

Weighted_Sum = Σ (input × weight) + bias

For example, consider the following:

- Inputs: [2, 3]

- Weights: [0.5, 0.8]

- Bias: 1

The neuron first calculates the weighted sum:

Weighted sum = 2*0.5 + 3*0.8 + 1 = 4.9

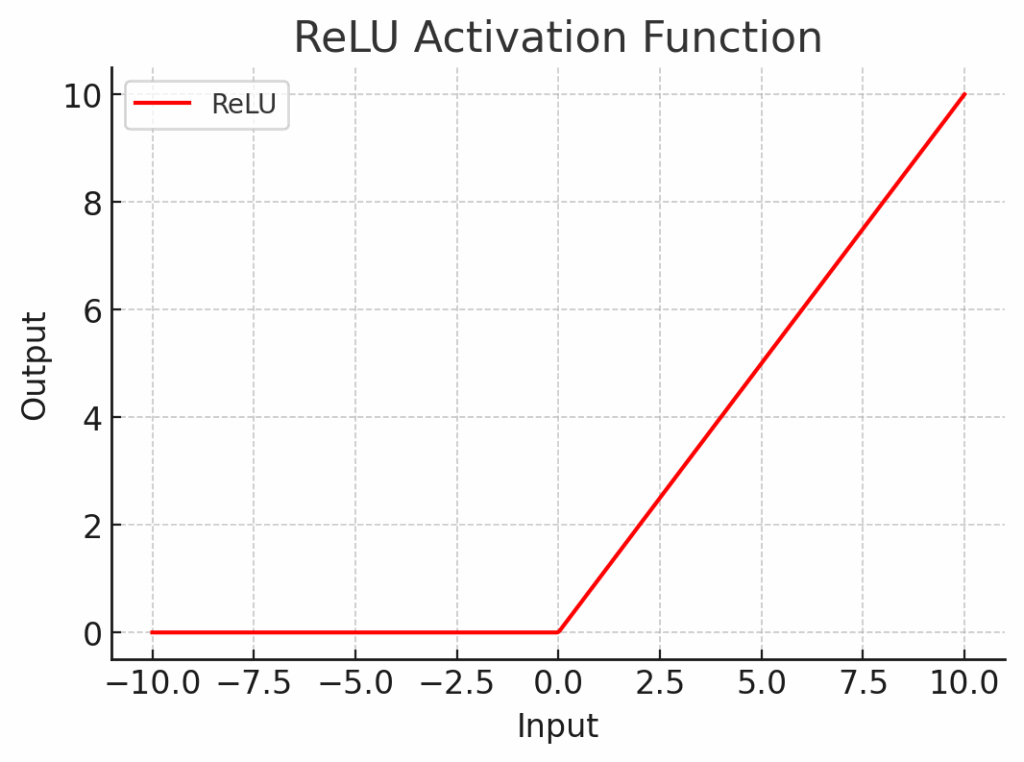

Next, the activation function is applied. Using ReLU:

Output = ReLU(4.9) = 4.9
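The same calculation can be written as a minimal Python sketch. The helper names `weighted_sum`, `relu`, and `neuron` are made up for illustration and do not come from any library. A second call with negative weights shows the other case from the list above: the weighted sum turns negative and ReLU blocks the signal entirely.

```python
def weighted_sum(inputs, weights, bias):
    """Sum of (input × weight) over all inputs, plus the bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

def relu(z):
    """ReLU: 0 for negative values, the value itself otherwise."""
    return max(0.0, z)

def neuron(inputs, weights, bias, activation=relu):
    """Output = Activation(Weighted_Sum)."""
    return activation(weighted_sum(inputs, weights, bias))

# The worked example: inputs [2, 3], weights [0.5, 0.8], bias 1
z = weighted_sum([2, 3], [0.5, 0.8], 1)
out = neuron([2, 3], [0.5, 0.8], 1)
print(round(z, 2), round(out, 2))  # 4.4 4.4

# Negative weights make the weighted sum negative (about -2.4), so ReLU outputs 0
print(neuron([2, 3], [-0.5, -0.8], 1))  # 0.0
```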

Here, the activation function transforms the raw weighted sum into a meaningful signal for the next layer. In a cat recognition network, each neuron performs similar computations to decide whether the data suggests:

- “Yes, this looks like a cat”, or

- “No, probably not.”

Depending on the result, the signal is either passed forward or dampened/blocked.

This transformation is what allows networks to learn non-linear patterns, the kinds of patterns that help identify shapes, understand language, or predict future events.

Common Types of Activation Functions

Did You Know?

| Some networks can even learn the shape of their activation functions during training, neuron by neuron. It’s like giving AI a choice of tools! |

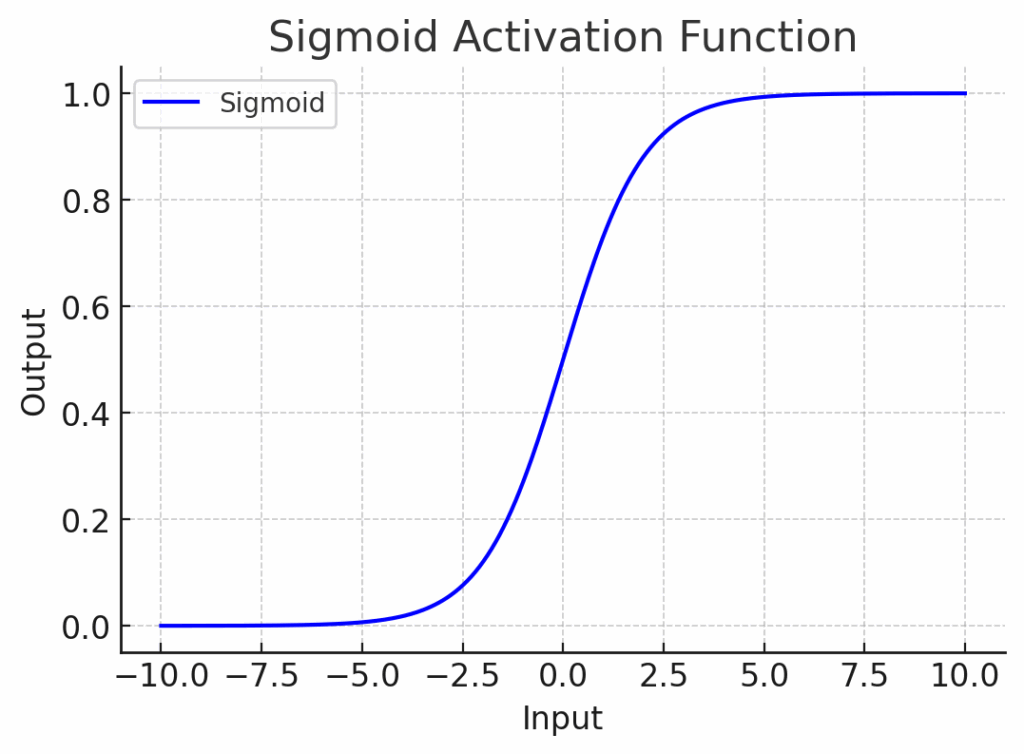

- Sigmoid Function: The sigmoid squashes any number into a range between 0 and 1. This makes it useful when we want probabilities. Example: Predicting a yes/no outcome, such as whether a photo contains a cat.

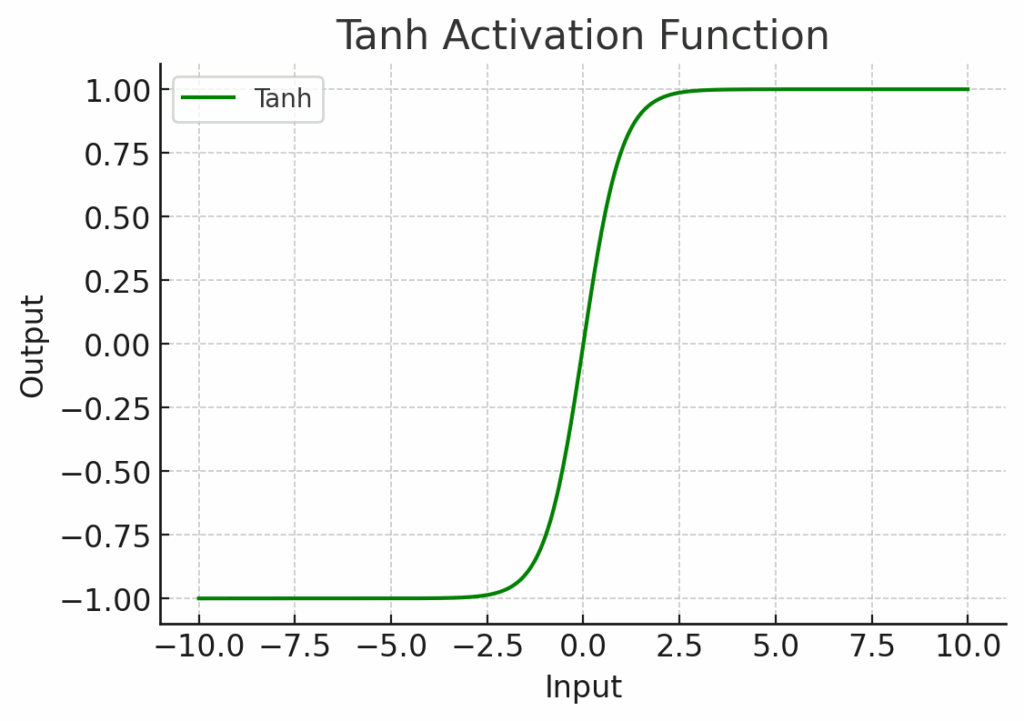

- Tanh (Hyperbolic Tangent): Similar to sigmoid, but outputs values between -1 and 1. Because its output is centered on zero, it suits data that can be either negative or positive. Example: Analyzing stock price changes (rise or fall) or temperature changes above or below zero.

- ReLU (Rectified Linear Unit): ReLU is simple: it outputs 0 if the input is negative and passes the input through unchanged if it is positive. It is one of the most widely used activation functions in modern networks. Example: Used in image recognition tasks like identifying faces in photos.
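The three functions above are short enough to write out directly. This is a minimal Python sketch using only the standard `math` module; the function names are our own. The sigmoid is written in a numerically stable form (an implementation choice, not something the text requires) so that very negative inputs do not overflow `math.exp`.

```python
import math

def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, rewrite as e^z / (1 + e^z) so math.exp never overflows
    e = math.exp(z)
    return e / (1.0 + e)

def tanh(z):
    """Squashes any real number into the range (-1, 1), centered on 0."""
    return math.tanh(z)

def relu(z):
    """Outputs 0 for negative inputs and passes positive inputs through."""
    return max(0.0, z)

for z in (-3.0, -0.5, 0.0, 0.5, 3.0):
    print(f"z={z:+.1f}  sigmoid={sigmoid(z):.3f}  tanh={tanh(z):+.3f}  relu={relu(z):.1f}")
```

Notice how sigmoid and tanh flatten out for large positive or negative inputs, while ReLU keeps growing for positive inputs and is exactly 0 for negative ones.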

Fun Fact

| ReLU (Rectified Linear Unit) became super popular because it made deep networks train faster and greatly reduced the “vanishing gradient problem” that slowed down training with older functions like sigmoid and tanh. |
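A rough way to see the vanishing gradient problem: during training, backpropagation multiplies together one local derivative per layer. The sigmoid's derivative is never larger than 0.25, so that product shrinks quickly with depth, while ReLU's derivative is exactly 1 for positive inputs. The sketch below is deliberately simplified: it assumes every layer sees the same input `z = 0.5` and ignores the weights, which also enter the real product.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of sigmoid: s(z) * (1 - s(z)); its maximum is 0.25 at z = 0."""
    s = sigmoid(z)
    return s * (1.0 - s)

def relu_grad(z):
    """Derivative of ReLU: 1 for positive inputs, 0 for negative ones."""
    return 1.0 if z > 0 else 0.0

# Simplified picture: multiply one local derivative per layer.
# Assumption for illustration only: every layer sees z = 0.5, weights ignored.
depth = 10
z = 0.5
sigmoid_chain = sigmoid_grad(z) ** depth
relu_chain = relu_grad(z) ** depth
print(f"sigmoid: {sigmoid_chain:.2e}  relu: {relu_chain}")
```

After only ten sigmoid layers the signal that reaches the early layers is below one millionth of its original size, so those layers barely learn. With ReLU the same chain stays at 1 for positive inputs.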

How Do Activation Functions Help Learning?

Activation functions give networks the ability to learn complex, layered patterns. Without them, a network could only solve very simple, linear problems. It would fail at recognizing objects in pictures or understanding natural language.
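A classic small example of a problem that is not linear is XOR: output 1 when exactly one of two inputs is 1. No single weighted sum with a threshold can separate (0, 1) and (1, 0) from (0, 0) and (1, 1), because no straight line divides those points. The sketch below is a tiny network with two ReLU hidden neurons that computes XOR exactly. Its weights are hand-picked for illustration rather than learned, and `xor_net` is a made-up name.

```python
def relu(z):
    return max(0.0, z)

def xor_net(x1, x2):
    """Tiny 2-2-1 network with hand-picked (not learned) weights that computes XOR."""
    h1 = relu(x1 + x2)        # hidden neuron 1: weights [1, 1], bias 0
    h2 = relu(x1 + x2 - 1)    # hidden neuron 2: weights [1, 1], bias -1
    return h1 - 2 * h2        # output neuron: weights [1, -2], bias 0, no activation

for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, xor_net(x1, x2))
```

Remove the two `relu` calls and the output becomes `(x1 + x2) - 2 * (x1 + x2 - 1) = 2 - (x1 + x2)`, a plain linear function that already gives the wrong answer (2) for the input (0, 0).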

With activation functions, the network can detect shapes, textures, and meanings step by step. Each layer learns something more abstract, much as our brain builds up from detecting edges, to shapes, to whole objects.

Activation functions are what give neural networks their power. They allow AI to recognize patterns, make predictions, and make sense of complex data, step by step and layer by layer. Without them, deep learning as we know it would not exist.