Agentic AI is a kind of artificial intelligence that can make its own decisions and take action without needing step-by-step instructions from humans. Unlike traditional AI, which waits for a command or a prompt, an agentic system understands its goals, plans how to achieve them, and keeps improving over time. It can manage tasks, react to new situations, and even learn from its own successes and mistakes.

This makes agentic AI very different from most of the systems we use today. Most AI tools are reactive: they respond when you ask them something. Agentic AI, on the other hand, is proactive. It can decide what to do next, find the best way to do it, and adapt when things change.

But have you ever wondered how this actually happens? What makes an AI capable of such independence? The secret lies in a repeating process called The Agentic Cycle, a loop that every agent goes through as it interacts with the world. This cycle helps it sense what’s going on, think and plan its next move, act on those plans, and then learn from what happens. Step by step, this cycle helps an agent grow smarter, more capable, and more aligned with its goals.

From Reactive to Agentic

Traditional AI systems are reactive. They perform tasks when told to, like answering a question, labelling an image, or translating text. Each response is a reaction to a direct input. Agentic AI, in contrast, is goal-driven. It doesn’t just wait for instructions; it can interpret a goal, plan the steps required, execute them, and learn from the outcome.

For example:

- A chatbot answers when you ask for meeting times.

- An agentic assistant finds open slots in everyone’s calendars, sends invites, and reschedules if a conflict appears.

This shift from responding to acting is the foundation of Agentic AI.

The Core Components of Agentic AI

Behind every agentic system are several interlinked components that together create autonomy. Each plays a distinct role in how the AI understands, decides, and acts.

1. Perception: Understanding the World

Before acting, the AI must perceive what’s going on. Perception is how it interprets inputs, whether those come as text, speech, images, structured data, or even signals from external applications.

Modern Agentic AI can combine multiple forms of input, a process known as multimodal perception. For example, it might read a report, analyze a chart, and interpret the tone of a conversation all at once.

This ability to understand complex environments forms the starting point of intelligent behavior.
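One way to picture perception is as a step that converts very different raw inputs into a single, uniform record the rest of the agent can work with. The sketch below is only an illustration under that assumption: the `Observation` type and the `perceive` function are hypothetical names, and a real system would hand images or audio to a dedicated model rather than just counting bytes.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class Observation:
    modality: str  # which kind of input this was, e.g. "text" or "structured"
    summary: str   # a normalized, text-form description of the input


def perceive(raw: Any) -> Observation:
    """Turn one raw input into a normalized observation (toy version)."""
    if isinstance(raw, str):
        return Observation("text", raw.strip())
    if isinstance(raw, dict):
        fields = ", ".join(f"{key}={value}" for key, value in raw.items())
        return Observation("structured", fields)
    if isinstance(raw, (bytes, bytearray)):
        # Placeholder: a real agent would call an image or audio model here.
        return Observation("binary", f"{len(raw)} bytes of media")
    raise TypeError(f"unsupported input type: {type(raw).__name__}")


inputs = ["  Q3 revenue is up.  ", {"region": "EU", "growth": 0.12}, b"\x89PNG"]
observations = [perceive(item) for item in inputs]
```

Whatever the input looked like originally, the later stages only ever see `Observation` objects, which keeps reasoning code independent of the input format.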

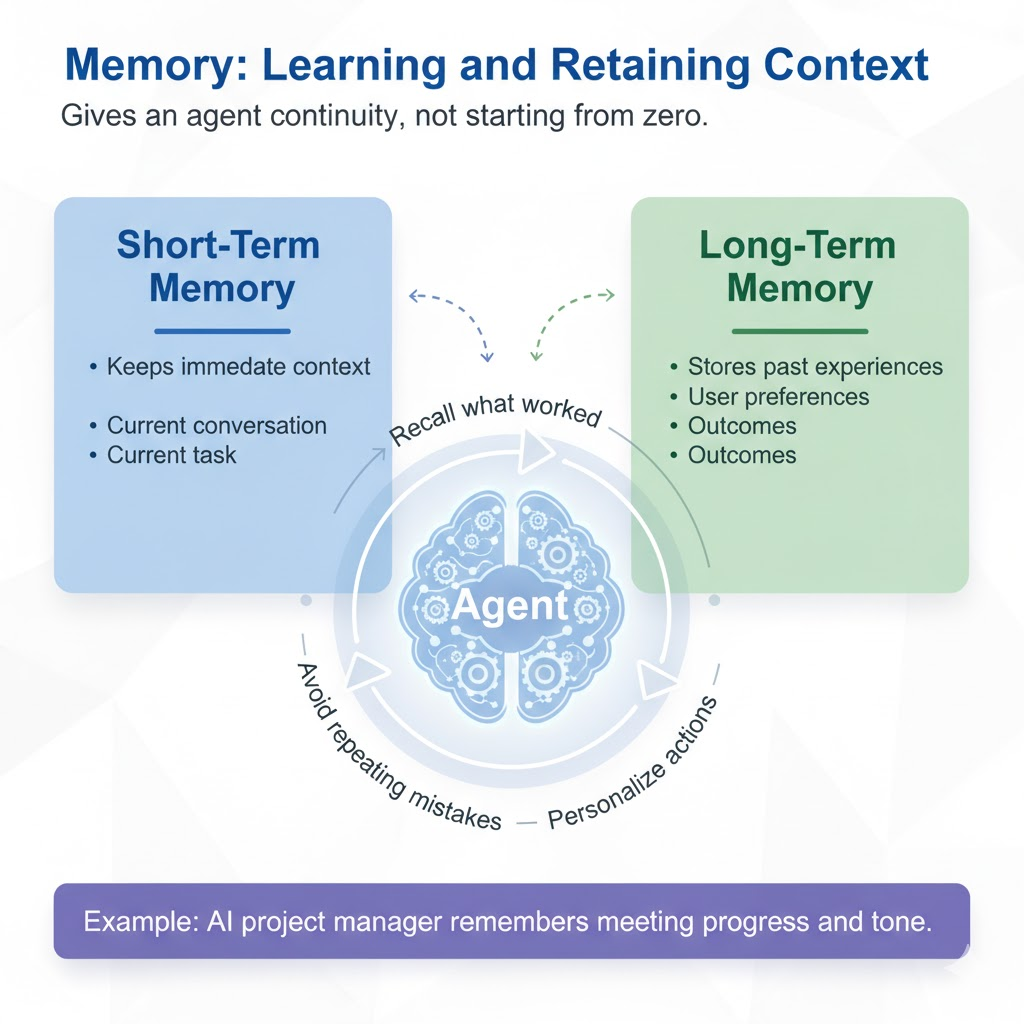

2. Memory: Learning and Retaining Context

Memory gives an agent continuity, which is the sense that it’s not starting from zero every time. There are generally two kinds of memory in agentic systems:

- Short-term memory: Keeps immediate context about the current conversation or task.

- Long-term memory: Stores past experiences, user preferences, and outcomes.

With memory, an agent can recall what worked before, avoid repeating mistakes, and personalize its actions. For example, an AI project manager that remembers the progress and tone of last week’s meeting can start the next one where things left off.
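The two kinds of memory can be sketched in a few lines. This is a minimal illustration, not how any particular framework stores memory: `AgentMemory` and its method names are made up for this example, short-term memory is a fixed-size window of recent messages, and long-term memory is a plain dictionary standing in for a persistent store.

```python
from collections import deque


class AgentMemory:
    """Toy memory with a bounded short-term window and a long-term store."""

    def __init__(self, short_term_size=5):
        # Oldest messages fall out automatically once the window is full.
        self.short_term = deque(maxlen=short_term_size)
        # Stand-in for a database or vector store that survives across sessions.
        self.long_term = {}

    def observe(self, message):
        """Add a message to the current conversation context."""
        self.short_term.append(message)

    def remember(self, key, value):
        """Save a fact, preference, or outcome for later sessions."""
        self.long_term[key] = value

    def recall(self, key, default=None):
        """Look up something stored earlier."""
        return self.long_term.get(key, default)

    def context(self):
        """Return the recent messages, oldest first."""
        return list(self.short_term)


memory = AgentMemory(short_term_size=2)
for message in ["kickoff notes", "budget question", "deadline moved"]:
    memory.observe(message)
memory.remember("last_meeting_status", "design review pending")
```

After these calls, `memory.context()` holds only the two most recent messages, while `memory.recall("last_meeting_status")` still returns the stored status, which is the continuity the project manager example relies on.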

3. Reasoning and Planning: Thinking and Deciding

This is the brain of Agentic AI. Here, the system interprets goals, breaks them into smaller subtasks, and decides the best way to reach the desired outcome. It uses reasoning to connect information, evaluate options, and plan sequences of actions.

For example, when given the goal “optimize our social media engagement,” the AI might plan to:

- Collect analytics data.

- Identify patterns.

- Draft improved posting schedules.

- Generate reports with recommendations.

This chain of reasoning, moving from abstract intent to concrete steps, is what gives agentic systems direction and structure.
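A plan like the one above can be represented as subtasks plus the subtasks each one depends on. The sketch below assumes the plan has already been produced (in a real agent, typically by a language model) and only shows how to turn it into a valid execution order. The subtask names and the `order_subtasks` helper are illustrative; `graphlib.TopologicalSorter` is part of the Python standard library from version 3.9.

```python
from graphlib import TopologicalSorter

# Each subtask maps to the set of subtasks that must finish before it starts.
plan = {
    "collect_analytics": set(),
    "identify_patterns": {"collect_analytics"},
    "draft_schedule": {"identify_patterns"},
    "generate_report": {"identify_patterns", "draft_schedule"},
}


def order_subtasks(dependencies):
    """Return the subtasks in an order that respects every dependency."""
    return list(TopologicalSorter(dependencies).static_order())


steps = order_subtasks(plan)
# steps == ["collect_analytics", "identify_patterns",
#           "draft_schedule", "generate_report"]
```

Storing dependencies explicitly, rather than a flat list, also lets an agent notice which steps could run in parallel and which must wait.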

4. Action and Execution: Doing Things

After planning, the AI must act. Action involves interacting with the external world, whether through APIs, applications, or devices. Execution can mean sending an email, querying a database, running code, or adjusting a digital process. The key is that the AI doesn’t stop at suggesting what to do. It does it.

For example, a content agent could draft an article, check it for tone and accuracy, and then publish it automatically once approved. This step transforms AI from a tool into an active collaborator.
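A common way to wire up execution is a registry that maps action names to ordinary functions, so the planner only has to emit a name and some arguments. The sketch below is a simplified illustration of that pattern: the `tool` decorator, the `execute` function, and both tools are hypothetical, and the tools return strings instead of really sending email or touching a database.

```python
TOOLS = {}  # maps a tool name to the function that implements it


def tool(name):
    """Decorator that registers a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("send_email")
def send_email(to, subject):
    # Stand-in: a real tool would call an email API here.
    return f"email to {to}: {subject}"


@tool("query_database")
def query_database(table):
    # Stand-in: a real tool would run a query here.
    return f"rows from {table}"


def execute(action):
    """Run one planned action of the form {"tool": name, "args": {...}}."""
    name = action["tool"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**action.get("args", {}))


result = execute({"tool": "send_email",
                  "args": {"to": "team@example.com", "subject": "Weekly report"}})
```

Returning an error string for an unknown tool, instead of raising, gives the agent something it can observe and react to on the next turn.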

5. Feedback Loop: Learning from Results

Every intelligent agent needs feedback. Once it acts, the system observes the results and adjusts accordingly. This process of learning from outcomes is what makes the AI smarter over time. If an action produces a poor result, the system updates its internal model or strategy.

For example, if an AI sends an email campaign that performs poorly, it can analyze the open rates, adjust the subject lines, and improve future campaigns automatically. Feedback closes the loop, creating a continuous cycle of improvement.
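The email example can be reduced to a small bookkeeping exercise: record what happened for each subject line, then prefer the one with the best observed open rate. The sketch below is deliberately simple and the class name `SubjectLineStats` is an assumption for illustration. It always picks the current best variant, whereas a fuller approach would also keep testing less-used variants from time to time.

```python
class SubjectLineStats:
    """Tracks open rates per subject-line variant and picks the best one."""

    def __init__(self, variants):
        self.sent = {variant: 0 for variant in variants}
        self.opened = {variant: 0 for variant in variants}

    def record(self, variant, sent, opened):
        """Feedback step: store the outcome of one campaign."""
        self.sent[variant] += sent
        self.opened[variant] += opened

    def open_rate(self, variant):
        sent = self.sent[variant]
        return self.opened[variant] / sent if sent else 0.0

    def best(self):
        """Strategy update: choose the variant with the highest open rate."""
        return max(self.sent, key=self.open_rate)


stats = SubjectLineStats(["Your weekly digest", "3 things you missed"])
stats.record("Your weekly digest", sent=100, opened=10)
stats.record("3 things you missed", sent=100, opened=25)
next_subject = stats.best()  # "3 things you missed"
```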

The Agentic Cycle

At the core of every agentic system lies a repeating cognitive loop:

Environment → Perception → Reasoning → Action → Feedback → Updated Knowledge

- Environment: The outside world the AI operates in (data, users, systems, or surroundings).

- Perception: The AI gathers data and interprets it, much as a person sees, hears, or reads.

- Reasoning: The AI processes the information, plans actions, and makes decisions.

- Action: It carries out the chosen steps to achieve its goal.

- Feedback: The system receives the results of its actions and measures success or failure.

- Updated Knowledge: The AI stores what it has learned to improve its next decisions.

This loop runs continuously, allowing the AI to adapt dynamically. Just like humans, the system becomes more capable through repeated experience.
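To make the loop concrete, here is a deliberately tiny version in which the "world" is a single number and the goal is to reach a target value. Everything in it is an assumption made for illustration, including the function name `agentic_cycle` and the starting step size of 8. The point is only to show each stage of the cycle as one line, with feedback changing what the agent knows (its step size) whenever an action fails to bring it closer.

```python
def agentic_cycle(target, start=0.0, max_iterations=20):
    environment = {"value": start}   # Environment: the state the agent acts on
    knowledge = {"step": 8.0}        # What the agent currently believes works

    for _ in range(max_iterations):
        observed = environment["value"]             # Perception
        error = target - observed
        if error == 0:
            break                                   # Goal reached

        # Reasoning: move toward the target using the current step size.
        move = knowledge["step"] if error > 0 else -knowledge["step"]

        environment["value"] += move                # Action

        new_error = target - environment["value"]   # Feedback
        if abs(new_error) >= abs(error):
            # Updated Knowledge: the move did not help, so use smaller steps.
            knowledge["step"] /= 2

    return environment["value"], knowledge["step"]


final_value, learned_step = agentic_cycle(target=5.0)
# final_value == 5.0, learned_step == 1.0
```

The agent starts by overshooting, but each unhelpful move shrinks its step size until it lands on the target. Real agents replace each line with something far richer, yet the control flow is the same.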

The Technologies That Power Agentic AI

Several modern technologies make this level of autonomy possible:

- Large Language Models (LLMs): These serve as the reasoning and communication core. They help the system understand language, plan actions, and make decisions.

- Memory Architectures: Vector databases or knowledge graphs allow the AI to store and retrieve long-term context efficiently.

- Autonomous Agent Frameworks: Tools like LangChain, AutoGPT, or OpenDevin let developers build AI that can plan and execute multi-step goals.

- APIs and Integrations: Enable agents to connect with real-world tools such as browsers, spreadsheets, email, and databases.

- Reinforcement Learning and Retrieval-Augmented Generation (RAG): Reinforcement learning helps the agent improve its decisions from feedback, while RAG grounds its responses in data retrieved from trusted sources.

Together, these layers turn static models into living systems that perceive, decide, and act intelligently.
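The retrieval idea behind vector memory and RAG can be shown without any external service. The sketch below is a toy stand-in: it uses word counts as "embeddings" and cosine similarity to find the stored note closest to a query. Production systems use learned embeddings and a vector database instead, and the function names `embed`, `cosine`, and `retrieve` are chosen here purely for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    """Return the k stored documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(documents,
                    key=lambda doc: cosine(query_vector, embed(doc)),
                    reverse=True)
    return ranked[:k]


notes = [
    "refund policy for damaged items",
    "office opening hours on weekends",
    "how to reset a password",
]
top = retrieve("reset my password", notes)  # ["how to reset a password"]
```

In a RAG setup, the retrieved text would then be added to the model's prompt so that its answer is based on stored material rather than on recall alone.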

Real-World Examples of Agentic AI in Action

To see how all this fits together, let’s look at some real scenarios:

- AI Research Agent: Conducts literature reviews, summarizes papers, and suggests experiment designs.

- Customer Support Agent: Reads support tickets, finds relevant fixes, and updates the CRM automatically.

- Business Operations Agent: Monitors dashboards, flags anomalies, and runs corrective scripts.

- Creative Assistant: Writes, revises, and publishes marketing content with minimal human guidance.

Each of these systems cycles through perception, reasoning, action, and learning, becoming more effective with every iteration.

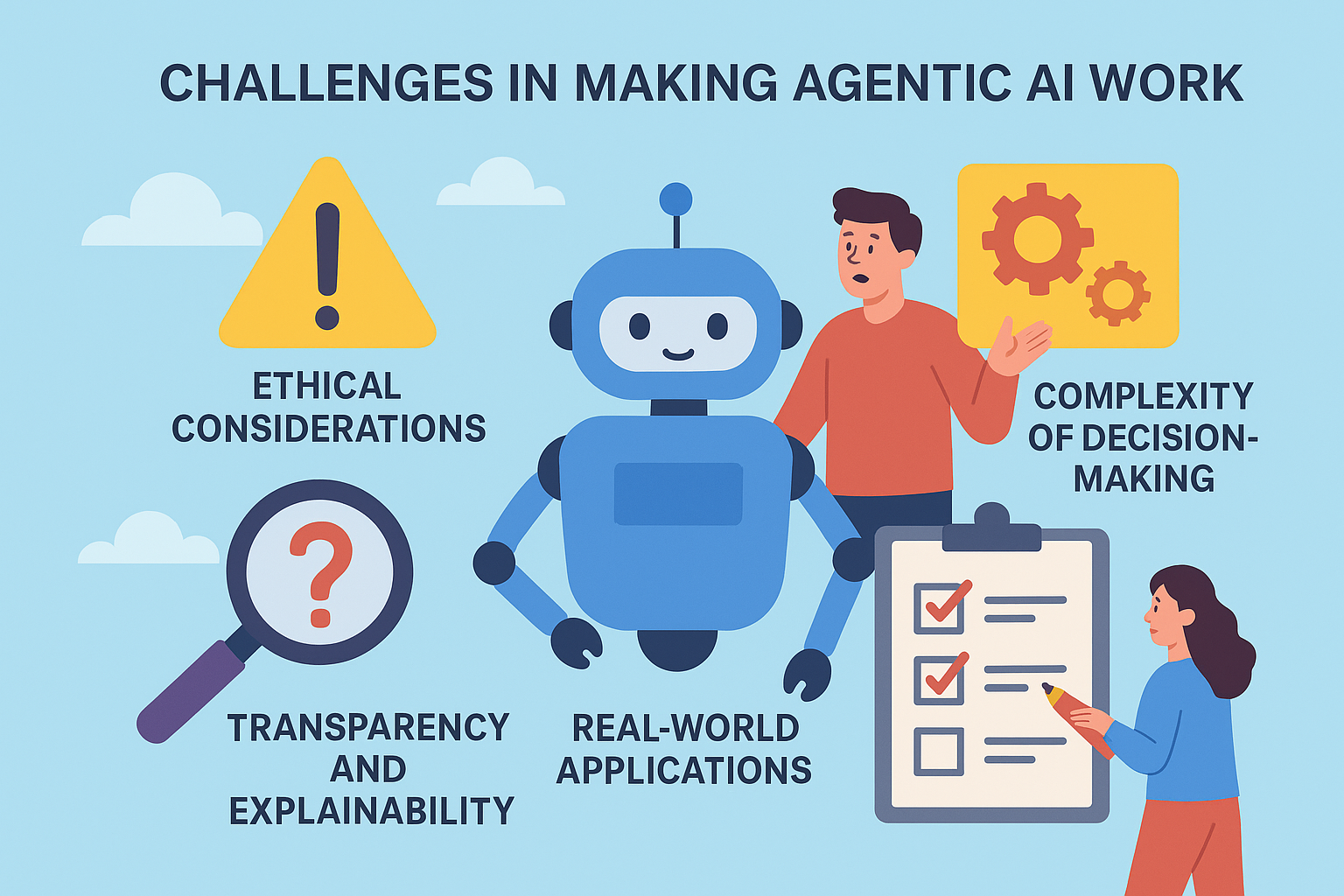

Challenges in Making Agentic AI Work

Building agentic AI systems that can act autonomously, make decisions, and learn from feedback is one of the most complex challenges in technology today. While the promise of AI agents is immense, the path to reliable, safe, and adaptable autonomy is riddled with technical, ethical, and societal obstacles.

1. Alignment and Control: Ensuring that autonomous agents act in ways that align with human values and intentions is incredibly hard. Even small misalignments in goals or incentives can cause unintended, large-scale outcomes when the agent operates independently.

2. Context Understanding: Agents must grasp not only factual data but also the nuances of human context such as emotions, cultural subtleties, and situational awareness. Achieving this level of understanding requires massive, multimodal reasoning capabilities that are still under development.

3. Memory and Adaptation: For true autonomy, agents must remember past actions, learn from them, and improve over time. But long-term memory management and safe adaptation raise deep technical challenges, from preventing “drift” to ensuring security and transparency in how agents evolve.

4. Accountability and Transparency: When AI agents make decisions autonomously, responsibility becomes blurred. Who is accountable: the developer, the deployer, or the AI itself? Designing traceable, interpretable systems that explain why decisions are made is a central challenge.

5. Infrastructure and Integration: Deploying agentic AI at scale requires vast computational resources and reliable integration across cloud systems, data pipelines, and real-world hardware. The complexity of such ecosystems can lead to instability, inefficiency, and high costs.

6. Ethics and Societal Impact: As AI agents become capable of negotiation, creation, and influence, they raise questions about consent, manipulation, and fairness. Establishing ethical boundaries and global governance structures is critical to ensure these systems serve human progress.