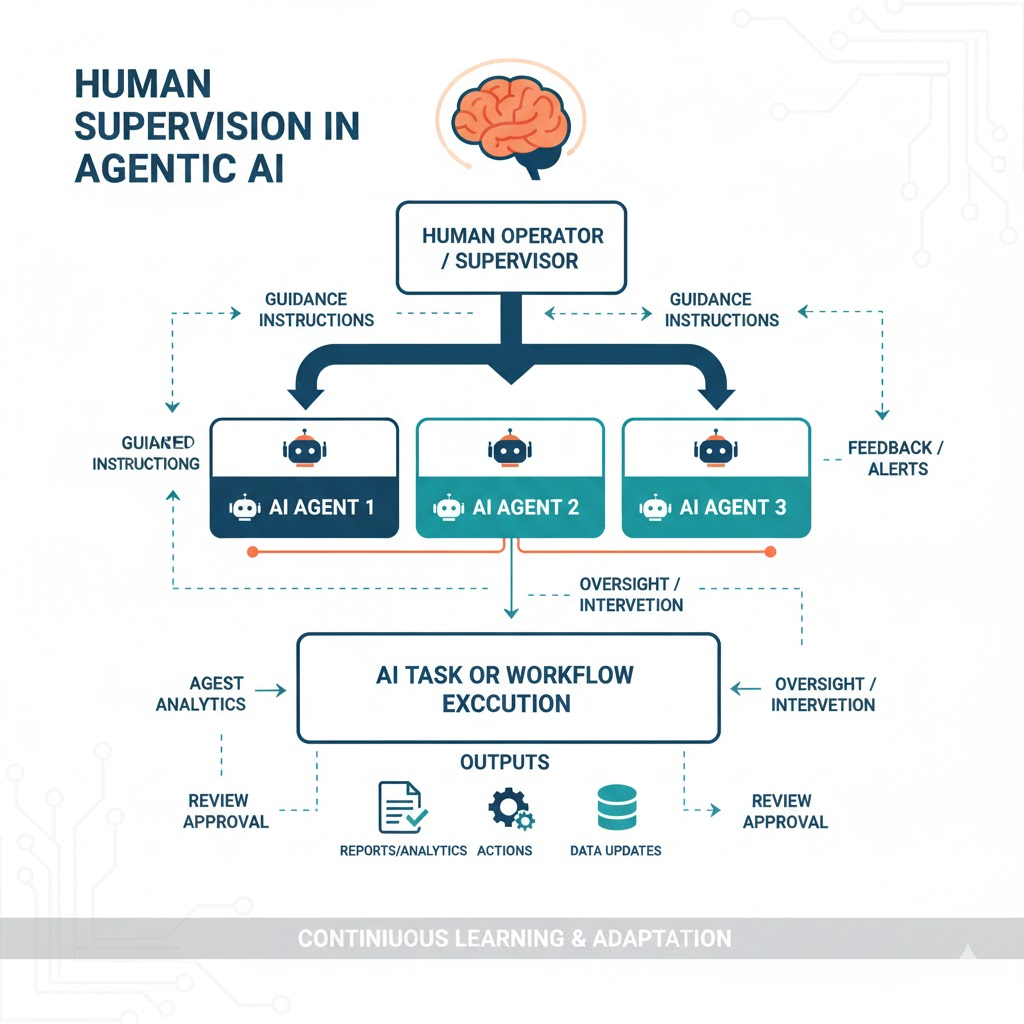

No matter how advanced an AI agent becomes, it cannot operate responsibly or reliably without human oversight. People guide, correct, and shape the agent’s understanding of the world, ensuring its actions remain grounded in real-world context and ethical reasoning.

Without this guidance, AI can easily hallucinate, make flawed assumptions, or act on incomplete information. Human feedback helps close these gaps, correcting oversights and refining the agent’s decision-making.

In every agentic system, the balance between autonomy and supervision is key. Some agents work entirely under human direction, while others act more independently, with humans stepping in only when needed. Keeping people involved somewhere along this range is known as “human in the loop” (HITL), a principle that helps ensure AI acts in ways aligned with human goals and values.

Why Humans Still Matter

Even the smartest agent can make errors or misjudge context. Humans bring qualities that AI lacks, such as judgment, empathy, and moral reasoning. Keeping people involved ensures that agents act responsibly and that outcomes remain meaningful.

Humans provide:

- Quality control: Checking and refining agent outputs.

- Ethical oversight: Preventing bias, harm, or misuse.

- Learning feedback: Teaching agents what “right” looks like through correction.

Examples:

- In AI-assisted hiring, recruiters validate shortlists generated by algorithms to avoid bias.

- Doctors use AI diagnostics as support but always confirm final results.

- Autonomous vehicles allow humans to override when conditions are uncertain.

Human involvement turns agents from tools into trusted collaborators.

Levels of Human Control

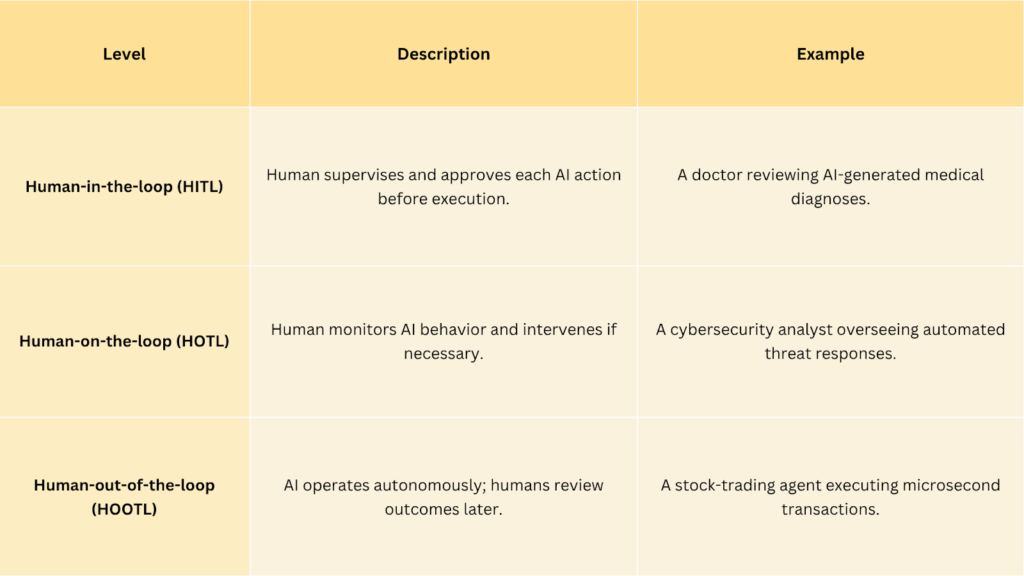

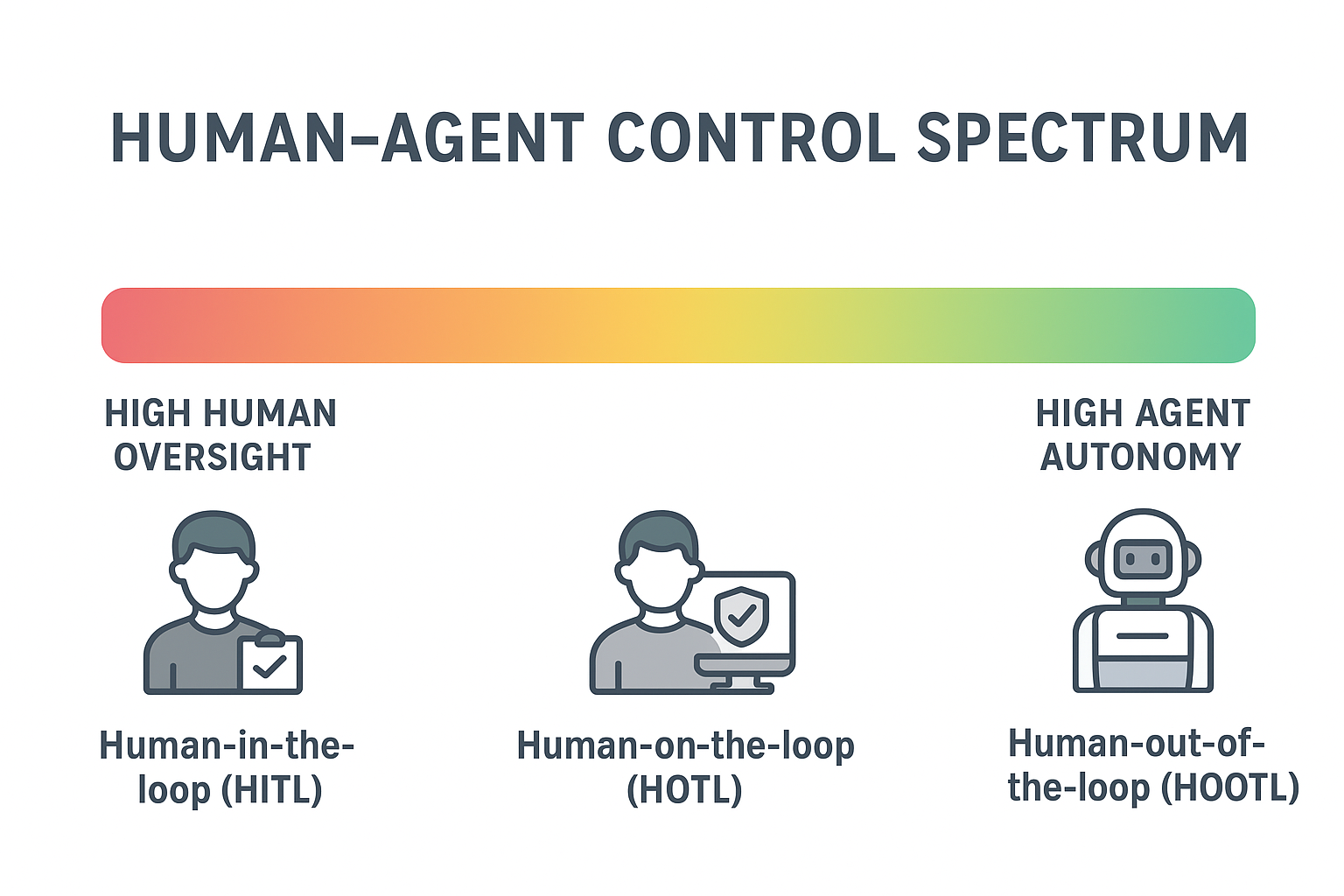

The relationship between humans and agents varies across systems, from full manual control to total autonomy. This range is usually described in terms of three key models.

These levels determine how autonomy and oversight are balanced in different use cases: the higher the risk, the tighter the human control.
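The rule “the higher the risk, the tighter the human control” can be expressed as a small policy table. The Python sketch below is a minimal illustration under stated assumptions: the three risk tiers, the oversight modes (`AUTONOMOUS`, `NOTIFY_AFTER`, `APPROVE_FIRST`), and the `run_action`, `approve`, and `notify` names are hypothetical labels chosen for this example, not the specific models referred to above.

```python
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Oversight(Enum):
    AUTONOMOUS = "agent acts alone"
    NOTIFY_AFTER = "agent acts, a human is informed and can review"
    APPROVE_FIRST = "a human must approve before the agent acts"


# Hypothetical policy: human control tightens as risk rises.
POLICY = {
    Risk.LOW: Oversight.AUTONOMOUS,
    Risk.MEDIUM: Oversight.NOTIFY_AFTER,
    Risk.HIGH: Oversight.APPROVE_FIRST,
}


def run_action(
    description: str,
    risk: Risk,
    approve: Callable[[str], bool],
    notify: Callable[[str], None],
) -> bool:
    """Return True if the action was executed, False if a human blocked it."""
    mode = POLICY[risk]
    if mode is Oversight.APPROVE_FIRST and not approve(description):
        return False  # the human declined, so the agent does nothing
    # ... the agent would perform the action here ...
    if mode is Oversight.NOTIFY_AFTER:
        notify(description)  # the human sees the action and can step in afterwards
    return True
```

For example, with an `approve` callback that always refuses, a `Risk.HIGH` action returns `False` and never runs, while a `Risk.LOW` action runs without asking anyone. Passing `approve` and `notify` as callbacks keeps the oversight policy separate from however the human is actually reached (a chat prompt, a ticket queue, a dashboard).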

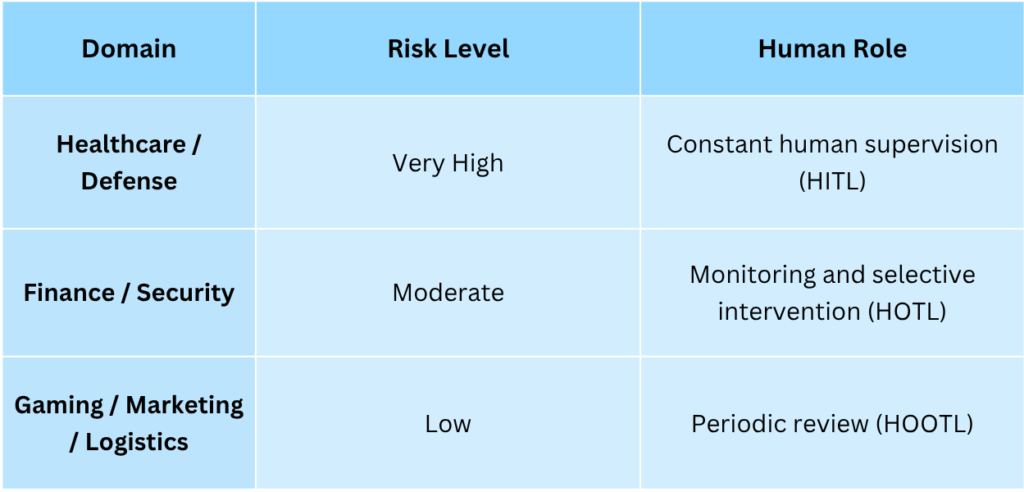

When to Keep Humans in the Loop

Not every system needs the same degree of human supervision. The right level depends on the domain, the risk involved, and the consequences of errors.

This framework helps developers and policymakers decide when autonomy is safe and where human judgment must remain central.
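To make the factors of domain, risk, and consequence concrete, the sketch below scores a task on each and maps the result to an oversight level. It is a hypothetical rule of thumb written for illustration only: the 1 to 3 scales, the decision to multiply the scores, the thresholds, and the level names are all assumptions that a real system would need to justify and tune.

```python
from dataclasses import dataclass


@dataclass
class Task:
    domain_sensitivity: int  # 1 = low stakes (e.g. drafting notes) .. 3 = high stakes (e.g. medicine, finance)
    error_likelihood: int    # 1 = rarely wrong .. 3 = often wrong or poorly tested
    error_severity: int      # 1 = minor and easily undone .. 3 = serious or irreversible

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1..3 scale.
        for name in ("domain_sensitivity", "error_likelihood", "error_severity"):
            value = getattr(self, name)
            if not 1 <= value <= 3:
                raise ValueError(f"{name} must be between 1 and 3, got {value}")


def oversight_level(task: Task) -> str:
    """Hypothetical rule combining domain, risk, and consequence into an oversight level."""
    # Serious or irreversible errors always require human approval, whatever the other factors.
    if task.error_severity == 3:
        return "approve first"
    # With severity at most 2 here, the product ranges from 1 to 18.
    score = task.domain_sensitivity * task.error_likelihood * task.error_severity
    if score >= 8:
        return "approve first"
    if score >= 3:
        return "notify after"
    return "autonomous"
```

The early return for severe errors reflects the idea that some consequences warrant human judgment no matter how unlikely they are; a purely multiplicative score could otherwise let a rare but irreversible mistake through.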

Collaboration, Not Competition

Agentic AI is not about replacing humans; it is about increasing human capability. When designed well, agents become partners that enhance creativity, speed, and precision.

Human strengths: context, empathy, ethics, and strategic thinking.

Agent strengths: data processing, consistency, and scalability.

Together, they create collaborative intelligence.

Examples:

- In scientific research, agents analyze massive datasets, while humans interpret the insights.

- In creative industries, AI tools generate concepts that designers refine into final works.

- In customer service, agents handle routine queries, while humans focus on empathy-driven cases.

The result is not automation but augmentation, in which people are empowered by intelligent systems.

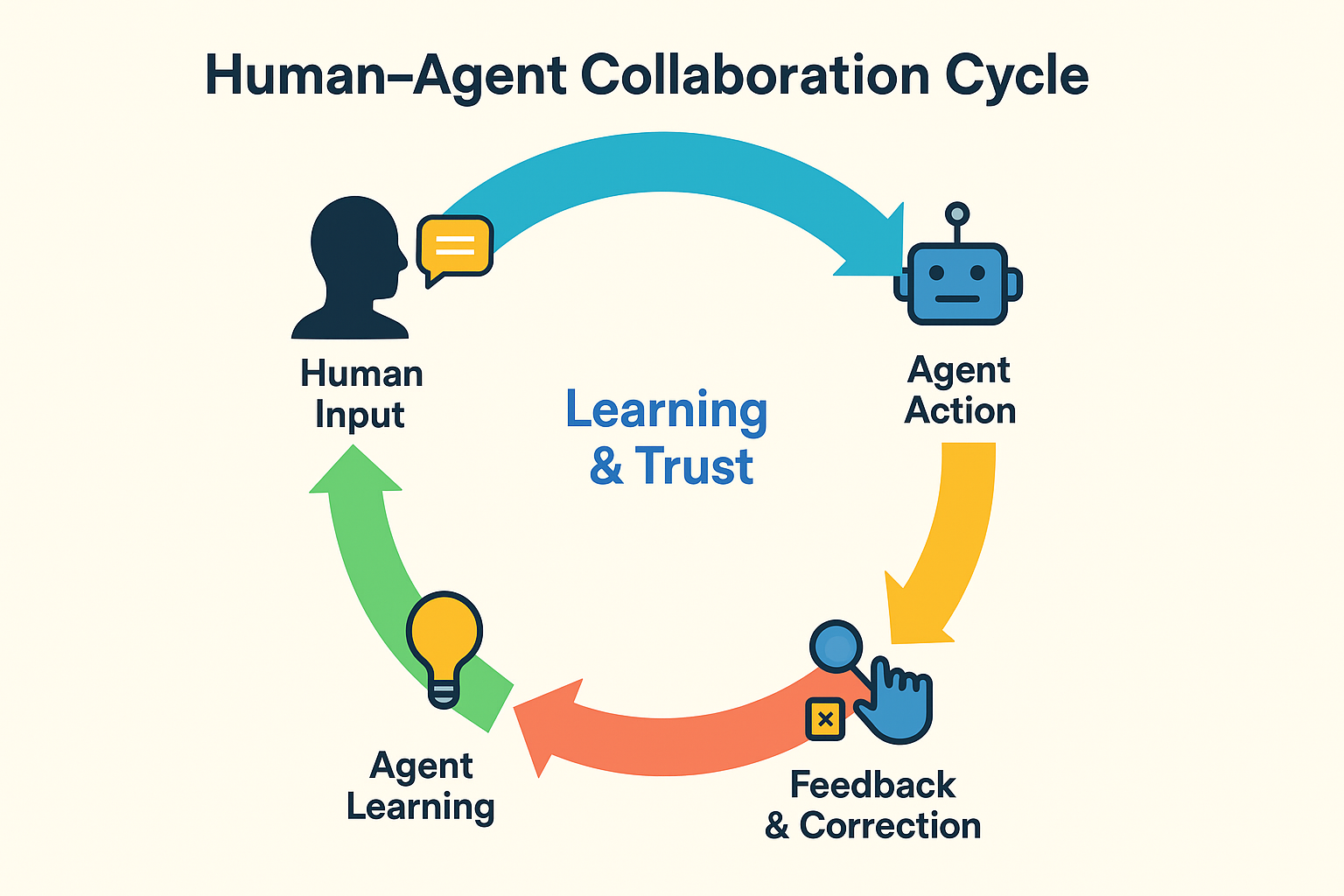

Human Feedback Loops in Learning

Humans don’t just supervise AI; they teach it. Many of the language models behind modern agents are fine-tuned with reinforcement learning from human feedback (RLHF), a process in which people rate or correct AI behavior to help the model learn what is desirable.

Example: During the development of models like ChatGPT, human evaluators compared and ranked multiple responses to the same prompt, guiding the system toward better reasoning and tone. This loop of feedback and improvement is what transforms a capable system into a useful, safe, and human-aligned agent.
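The comparison step can be illustrated with a toy reward model. In common RLHF setups, human rankings are turned into pairwise preferences, and a reward model is trained so that the preferred response scores higher, using a Bradley-Terry style loss `-log sigmoid(r_chosen - r_rejected)`. The sketch below fits that loss on a few made-up preference pairs with a linear model over hand-picked features instead of a neural network. The features, data, learning rate, and epoch count are all hypothetical, and the later reinforcement-learning step that optimizes the language model against this reward is not shown.

```python
import math

# Hypothetical features per response: [is_polite, answers_question, verbosity]
# Each pair is (features of the response a human preferred, features of the one they rejected).
PREFERENCES = [
    ([1.0, 1.0, 0.2], [0.0, 1.0, 0.2]),  # polite answer preferred over a rude one
    ([1.0, 1.0, 0.1], [1.0, 0.0, 0.1]),  # on-topic answer preferred over an off-topic one
    ([0.0, 1.0, 0.1], [0.0, 1.0, 0.9]),  # concise answer preferred over a rambling one
]


def reward(weights, features):
    """Linear reward model: the dot product of weights and features."""
    return sum(w * f for w, f in zip(weights, features))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_reward_model(pairs, lr=0.5, epochs=200):
    """Gradient descent on the pairwise loss -log sigmoid(r_chosen - r_rejected)."""
    weights = [0.0] * len(pairs[0][0])
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # The loss gradient w.r.t. weights is -(1 - sigmoid(margin)) * (chosen - rejected),
            # so stepping against it pushes the preferred response's reward up.
            scale = 1.0 - sigmoid(margin)
            weights = [w + lr * scale * (c - r) for w, c, r in zip(weights, chosen, rejected)]
    return weights


weights = train_reward_model(PREFERENCES)
# Check that every human-preferred response now receives the higher reward.
for chosen, rejected in PREFERENCES:
    print(reward(weights, chosen) > reward(weights, rejected))
```

The learned weights end up positive for politeness and relevance and negative for verbosity, which is the sense in which human comparisons “teach” the system what desirable behavior looks like.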

Balancing Autonomy and Trust

The real challenge is deciding how much freedom to give the agent and how much humans should trust it. If the agent acts too independently, users may lose control or confidence. If humans interfere too often, the system loses efficiency and learning potential.

This balance is achieved through calibrated trust, built on systems that are transparent, explainable, and clear about when they need human intervention. Trust doesn’t mean blind acceptance; it means knowing when to rely on the agent and when to step in.
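One way to approach calibrated trust in code is to have the agent defer to a human below a confidence threshold, and to check, on cases humans have already reviewed, whether the agent’s stated confidence matches how often it is actually right. The sketch below assumes the agent reports a confidence between 0 and 1; the `Outcome` record, the `should_defer` and `calibration_gap` helpers, and the 0.8 default threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    confidence: float  # the agent's self-reported confidence, 0.0 to 1.0
    correct: bool      # whether a human reviewer judged the answer correct


def should_defer(confidence: float, threshold: float = 0.8) -> bool:
    """Hypothetical rule: hand the decision to a human when confidence is below the threshold."""
    return confidence < threshold


def calibration_gap(history: list[Outcome], low: float, high: float) -> float:
    """Mean confidence minus observed accuracy for past outcomes with low <= confidence < high.

    A positive gap means the agent is overconfident in that range, so humans
    should trust it less there (for example, by raising the defer threshold).
    """
    bucket = [o for o in history if low <= o.confidence < high]
    if not bucket:
        raise ValueError("no reviewed outcomes in this confidence range")
    mean_confidence = sum(o.confidence for o in bucket) / len(bucket)
    accuracy = sum(o.correct for o in bucket) / len(bucket)  # True counts as 1
    return mean_confidence - accuracy
```

For instance, if answers reported at around 90% confidence turn out to be correct only 75% of the time, `calibration_gap` returns roughly 0.15, a signal that the agent is overconfident in that range and that more of its outputs should go to a human.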

Did You Know?

Even the most advanced AI systems can experience “hallucinations,” where they confidently generate false information. This is a key reason why human oversight remains essential.

The Future of Symbiotic Intelligence

The future of Agentic AI lies in symbiotic intelligence, a partnership where humans and agents continuously learn from each other. Agents will become skilled at knowing when to defer to humans. Humans, in turn, will learn to interpret agent reasoning, correct it thoughtfully, and delegate with confidence.

This isn’t about control or competition; it is about co-creation, where human insight and machine intelligence combine to solve problems no single mind could manage alone.