In the previous article, we learned how Artificial Intelligence, or AI, is helping the world in many amazing ways. From improving healthcare and education to helping scientists and making daily tasks easier, AI is becoming a big part of our lives.

But while AI has made many things easier for us, it's not perfect. Like every tool, AI has its limits. It's just as important to understand what AI cannot do as what it can do. Let's explore the limitations and risks of AI.

AI Does Not Truly Understand or Feel

AI can answer questions, solve problems, and even talk like a human. This is possible because of a technology called Natural Language Processing, which lets computers read, process, and produce human language. But even though it may sound smart, AI doesn't actually understand the meaning of what it's saying.

It doesn't have feelings or emotions. For example, if you write a sad story about losing a pet, an AI tool can read it and tell you what it's about, but it won't actually feel sad. It cannot feel happy, excited, scared, or anything else, because it's just a machine.

AI also cannot make moral judgments on its own. It doesn't have human judgment or a sense of right and wrong. That is why in important areas like healthcare, law, education, and government, humans must always stay in control. AI can give suggestions or ideas, but only humans can decide what is truly the right thing to do.

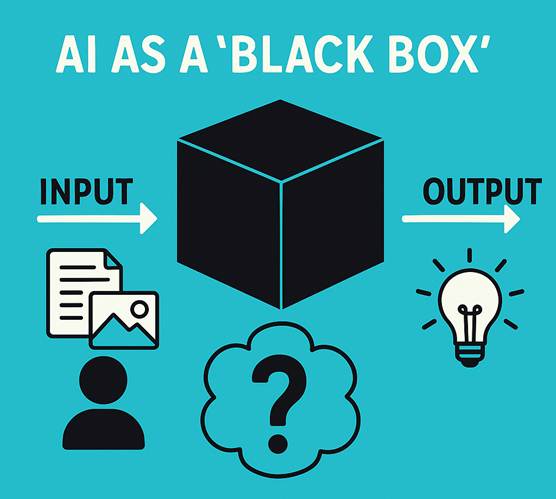

AI Often Works Like a “Black Box”

Have you ever heard of a "black box"? In AI, a system is called a black box when we cannot see or fully understand how it reached its answer. Sometimes even the people who built the AI can't explain how it made a decision.

For example, if an AI says that a student might fail a subject, it may have used many patterns in the student's data, like test scores, attendance, and more. But it usually won't clearly explain which of these mattered most. Teachers and parents may not know exactly why the AI made that prediction.

This is called the black box problem. It can make AI hard to trust in serious situations, because we cannot check whether its reasons actually make sense.

AI Needs Clean and Correct Data

AI learns by looking at data, lots and lots of it. But it can only learn well if the data is clean, correct, and fair. If the data is messy or wrong, then the AI might learn the wrong things and give wrong answers.

Imagine training an AI to help with job hiring, but the data only includes people from one city or one group. The AI might then learn that only those kinds of people should be hired and unfairly leave out everyone else. This is called bias.

AI in the News

Recently, an AI chatbot called Grok gave offensive and harmful responses for about 16 hours. According to the company that makes it, a faulty update caused Grok to copy ideas from users' posts online, including harmful ones. This shows how important it is to control what AI learns from and how it behaves.


That's why it's very important to give AI the right kind of data. If we're not careful, AI can end up giving unfair results in places like schools, hospitals, or offices. Before AI learns, people must check the data and make sure it's accurate and balanced.

AI Can Be Misused

Artificial Intelligence can be very helpful when used properly. But like any powerful tool, it can also be misused. This means people might use AI in ways that cause harm, cheat systems, or spread false information.

Some people use AI to make fake photos or videos that look real. These are called deepfakes. Others may use AI to cheat in exams by getting answers without studying. Some even use AI to create fake news or to trick people online into giving away personal details like passwords or bank information.

In more serious cases, AI can be used to carry out cyberattacks or steal private data. For example, someone might use an AI-generated voice or message to pretend to be another person.

Because of these risks, it is very important to talk about something called AI ethics.

AI ethics means using AI safely, responsibly, and fairly. As AI becomes more powerful, everyone, including students, teachers, developers, and leaders, must take AI ethics seriously. Rules, guidelines, and even laws are now being made to help stop the misuse of AI and protect people.

Did you know?

Some countries are creating laws to make sure AI is used safely and fairly, just like traffic rules are made to keep roads safe! For example, the European Union passed a law called the AI Act in 2024.

AI is a smart and helpful tool, but it also has limits. It cannot feel, it cannot truly understand, and it sometimes gives answers we cannot explain. It also needs good data to work well and can be misused if we’re not careful.

As AI becomes a bigger part of our world, we must remember to use it wisely. Humans must stay in charge, ask the right questions, and make the final decisions. When used with care and responsibility, AI can do great things while we stay safe, fair, and in control.