When we look at the world around us, most information does not arrive in isolation. Words form sentences, notes create melodies, and spoken sounds carry meaning only when we consider what came before. To teach machines to handle such sequences, we use Recurrent Neural Networks (RNNs).

There are different types of neural networks. Two of the most popular are:

- Convolutional Neural Networks (CNNs)

- Recurrent Neural Networks (RNNs)

In this article we will learn about Recurrent Neural Networks. RNNs are one of the most fascinating ideas in deep learning because they give computers a sense of memory. This memory allows them to understand not just single inputs, but entire sequences of information over time.

Why Memory Matters in AI

Imagine trying to understand a sentence word by word without remembering the earlier words. If I said, “The cat sat on the…” and then stopped, you would likely guess that the next word is “mat.” This is possible because your brain does not treat each word as an isolated piece of information; it remembers the context and builds meaning step by step.

Most traditional neural networks cannot do this. They process information in a fixed way: an input goes in, an output comes out, and then the network starts fresh for the next input. This works well for standalone inputs such as a single image or a row of numbers, but when information comes as a sequence where each step depends on what came before, they fall short.

This is exactly where Recurrent Neural Networks (RNNs) stand out. RNNs are built to handle sequential data by carrying information forward, almost like a memory. This allows them to understand patterns that stretch across time, making them useful in many real-world situations such as:

- Text: predicting the next word in a sentence or completing a paragraph.

- Speech: recognising and interpreting spoken language.

- Music: generating melodies or rhythms note by note.

- Stock prices: analysing time-series data to spot patterns or trends.

Did You Know?

| Predictive text on smartphones, like when your keyboard guesses the next word, is one of the most familiar consumer-facing applications of RNN-style models. |

By remembering what has happened before, RNNs give artificial intelligence the ability to deal with information in a way that feels much closer to human thought.

How RNNs Remember Past Steps

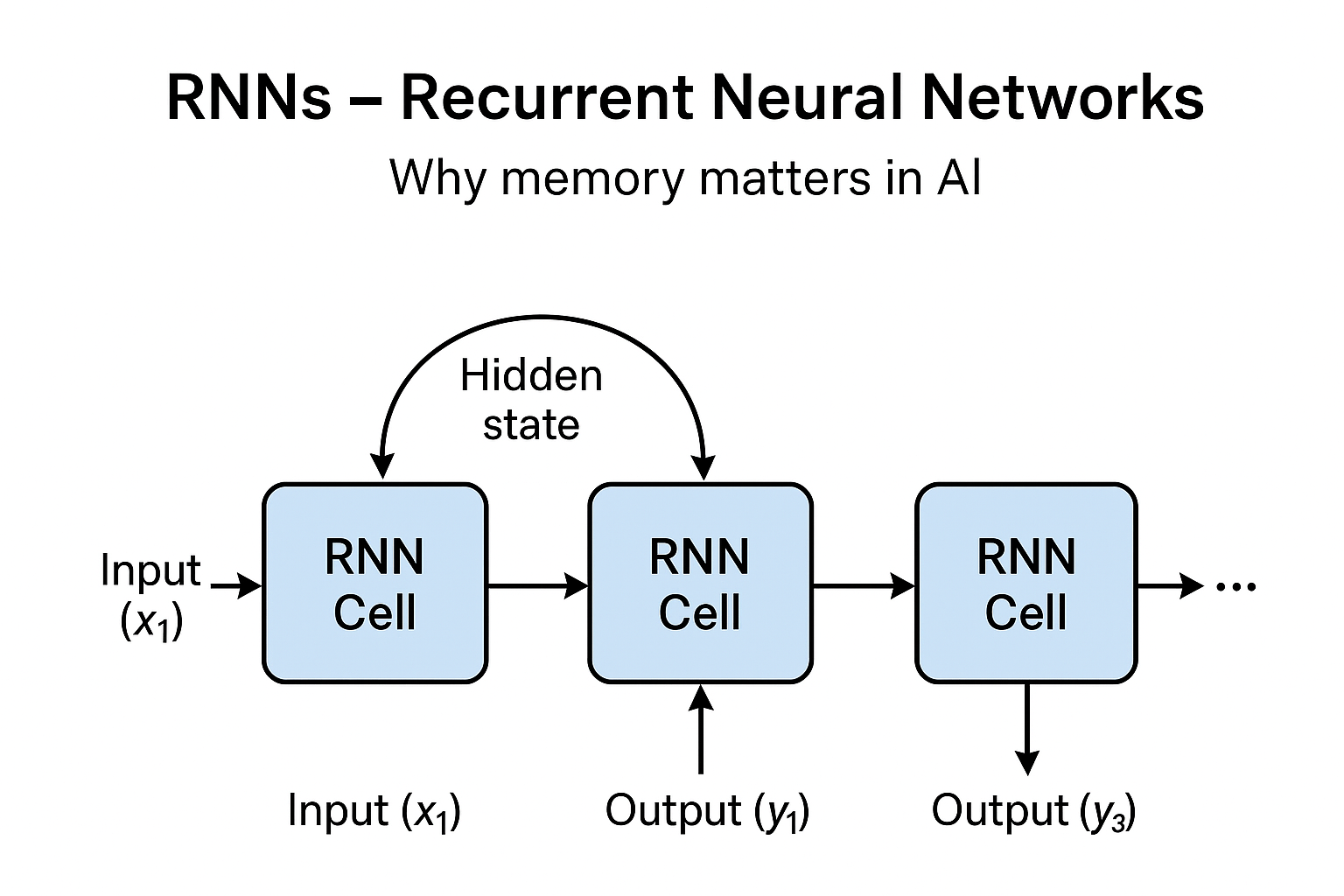

Unlike regular feedforward networks, which process an input and then forget it, RNNs feed their own internal state back into themselves at the next step. This loop allows them to carry information from one step to the next.

Think of it like passing a secret message along a chain: each step in the sequence adds its part, but the memory of earlier steps is still carried forward.

Each RNN cell passes a hidden state (its memory) forward, allowing the network to “remember” what happened before.
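To make the loop concrete, here is a minimal sketch of a simple RNN in plain Python. It is a toy under stated assumptions: the input and hidden state are single numbers rather than vectors, the weights (`w_xh`, `w_hh`, `b_h`) are made-up values rather than learned ones, and the function names are chosen only for illustration.

```python
import math

def rnn_step(x, h_prev, w_xh, w_hh, b_h):
    """One step of a toy scalar RNN: blend the new input with the previous hidden state."""
    return math.tanh(w_xh * x + w_hh * h_prev + b_h)

def run_rnn(inputs, w_xh=0.5, w_hh=0.8, b_h=0.0):
    """Run the cell over a sequence and return the hidden state after each step."""
    h = 0.0  # the memory starts out empty
    states = []
    for x in inputs:
        h = rnn_step(x, h, w_xh, w_hh, b_h)  # the new state depends on the old one
        states.append(h)
    return states

# Two sequences that differ only in their first element.
states_a = run_rnn([1.0, 0.0, 0.0])
states_b = run_rnn([0.0, 0.0, 0.0])

print(states_a)
print(states_b)
```

Although the last two inputs are identical in both sequences, the hidden states differ, because the first sequence still carries a trace of its opening `1.0`. That trace is the “memory” being passed along the chain, and it gets weaker with every step.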

RNNs in Action

- Language and Text: RNNs can complete your sentences, translate between languages, or even generate poetry. For example, predictive keyboards on phones use RNN-like models to suggest the next word.

- Music Generation: Musicians and researchers use RNNs to create original compositions. A network trained on sequences of notes can generate entirely new music that follows familiar patterns.

- Speech Recognition: Virtual assistants like Siri or Alexa have relied on RNN-style architectures to process your voice and understand what you mean.

- Time-Series Forecasting: In finance, RNNs analyse past stock movements to predict possible future trends.

AI in News

| RNNs have been used in analysing ECG signals to detect heart conditions early, which has been highlighted in medical AI research news. |

Simple RNNs vs. LSTMs

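A challenge with simple RNNs is that their memory fades quickly: they struggle to remember things from far back in a sequence. The underlying cause is known as the vanishing gradient problem. During training, the error signal shrinks as it is passed back through many time steps, so the network learns very little from early inputs.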
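The fading can be illustrated with a few lines of Python. This is a rough sketch, not a training loop: it assumes a toy scalar RNN of the form h_t = tanh(w_xh * x_t + w_hh * h_(t-1)) with made-up weights, and it tracks how strongly the current hidden state still depends on the starting state. By the chain rule, that dependence is a product of one factor per step, and each factor here is smaller than 1.

```python
import math

def gradient_through_time(inputs, w_hh=0.8, w_xh=0.5):
    """Track d h_t / d h_0 for a toy scalar RNN, one value per time step."""
    h = 0.0
    grad = 1.0
    grads = []
    for x in inputs:
        h = math.tanh(w_xh * x + w_hh * h)
        # Chain rule: d h_t / d h_(t-1) = w_hh * (1 - h_t^2)
        grad *= w_hh * (1.0 - h * h)
        grads.append(grad)
    return grads

grads = gradient_through_time([1.0] * 30)

# Influence of the starting state after 1, 10 and 30 steps.
print(grads[0], grads[9], grads[29])
```

Each printed value is smaller than the one before it, and after 30 steps the influence of the earliest state is close to zero. With so little signal left, training has almost nothing to adjust for inputs that far back.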

To solve this, researchers created Long Short-Term Memory networks (LSTMs). LSTMs are a special kind of RNN that can hold onto information for much longer. They have powered many advances in natural language processing and speech recognition.
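As a rough illustration of how an LSTM holds onto information, the sketch below implements one step of the standard textbook LSTM cell with scalar values. The article itself does not go into the gate mechanics, so treat the details as an assumption: the parameter names and values in `params` are hand-picked for the demonstration, not learned, and real LSTMs work with vectors and matrices.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a toy scalar LSTM cell; returns the new hidden and cell states."""
    f = sigmoid(p["w_f"] * x + p["u_f"] * h_prev + p["b_f"])    # forget gate: how much old memory to keep
    i = sigmoid(p["w_i"] * x + p["u_i"] * h_prev + p["b_i"])    # input gate: how much new content to write
    o = sigmoid(p["w_o"] * x + p["u_o"] * h_prev + p["b_o"])    # output gate: how much memory to expose
    g = math.tanh(p["w_g"] * x + p["u_g"] * h_prev + p["b_g"])  # candidate content
    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c

# Hypothetical hand-picked parameters: keep almost all old memory (b_f = 5)
# and write new content mainly when the input is 1.
params = {
    "w_f": 0.0, "u_f": 0.0, "b_f": 5.0,
    "w_i": 6.0, "u_i": 0.0, "b_i": -3.0,
    "w_o": 0.0, "u_o": 0.0, "b_o": 0.0,
    "w_g": 2.0, "u_g": 0.0, "b_g": 0.0,
}

h, c = 0.0, 0.0
cell_states = []
for x in [1.0] + [0.0] * 20:  # one signal, then 20 steps of silence
    h, c = lstm_step(x, h, c, params)
    cell_states.append(c)

c_first, c_final = cell_states[0], cell_states[-1]
print(c_first, c_final)
```

With these settings the cell state written at the first step is still mostly intact 20 steps later, because the forget gate stays close to 1. A simple RNN has no such gate, so its memory of that first input decays at every step.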

Recurrent Neural Networks mark a shift in how machines think. From recognising pictures with CNNs to understanding sequences with RNNs, deep learning now has the power to work with time, memory, and context. Whether it is predicting the next word in your text, composing a symphony, or interpreting speech, RNNs bring computers closer to how humans naturally process information.