Natural Language Processing (NLP) is the foundation of how machines understand and interact with human language, whether it’s written, spoken, or typed. It is a vital branch of Artificial Intelligence (AI) that powers many everyday technologies, from real-time translation and voice assistants to search engines and AI chatbots.

At its core, NLP enables computers to interpret not just the words we use, but the meaning, tone, and intent behind them. For instance, when an email system filters spam, it’s performing text classification. When an app translates “break a leg” while keeping its idiomatic meaning intact, that’s machine translation. And when a tool detects frustration in a customer review or condenses a long article into a few key sentences, it’s using sentiment analysis and text summarization.

These capabilities are made possible by a series of structured techniques, such as tokenization, part-of-speech tagging, and word embeddings, that help machines break down and analyze language. Together with the NLP tasks built on top of them, these techniques form the backbone of how AI systems read, interpret, and respond to human communication in ways that feel increasingly natural and intelligent.

Techniques Used in Natural Language Processing

The techniques of NLP are the tools and methods that help computers understand human language in structured ways. Think of them as the “grammar lessons” and “comprehension skills” that teach AI how to read, listen, and respond.

Let’s look at the most important techniques:

1. Tokenization

Before a machine can analyze text, it needs to break it down into smaller pieces: words, phrases, or symbols. This process is called tokenization.

For example, the sentence “NLP makes computers understand language” becomes tokens like [“NLP”, “makes”, “computers”, “understand”, “language”]. This helps the model handle text systematically.
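As a rough illustration, here is a minimal tokenizer sketch that uses only Python's standard library. The `tokenize` function is a hypothetical helper written for this article; production tokenizers handle contractions, URLs, and subword units far more carefully.

```python
import re

def tokenize(text):
    """Split text into word tokens and standalone punctuation marks."""
    # \w+ matches a run of letters or digits; [^\w\s] matches one punctuation mark
    return re.findall(r"\w+|[^\w\s]", text)

print(tokenize("NLP makes computers understand language"))
# ['NLP', 'makes', 'computers', 'understand', 'language']
```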

2. Stop Word Removal

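Words like “is,” “the,” “of,” and “and” appear frequently in language but carry little meaning on their own. NLP pipelines often remove these so that tasks such as search and topic classification can focus on the content-bearing words, which also shrinks the amount of text to process. Removal is not always safe, though: dropping a word like “not” can flip the meaning of a sentence.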
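A small sketch of the idea, assuming a tiny hand-picked stop word list (real lists contain a hundred or more entries and vary by library and language). `STOP_WORDS` and `remove_stop_words` are illustrative names, not part of any library.

```python
# Tiny illustrative stop word list; real lists are much longer
STOP_WORDS = {"is", "the", "of", "and", "a", "an", "in", "to"}

def remove_stop_words(tokens):
    """Keep only tokens whose lowercase form is not a stop word."""
    return [t for t in tokens if t.lower() not in STOP_WORDS]

tokens = ["The", "history", "of", "NLP", "is", "long", "and", "rich"]
print(remove_stop_words(tokens))
# ['history', 'NLP', 'long', 'rich']
```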

3. Stemming and Lemmatization

These methods are used to reduce words to their root forms.

- Stemming cuts off word endings (e.g., “running” → “run”).

- Lemmatization goes deeper by considering grammar and meaning (e.g., “better” → “good”).

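This helps the system recognize that “run” and “running” refer to the same concept. Irregular forms such as “ran” usually need lemmatization, because simply cutting off an ending cannot turn “ran” into “run.”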
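The difference between the two approaches can be sketched with a toy example. The suffix rules and the `LEMMAS` dictionary below are deliberately tiny assumptions made for illustration; real stemmers (such as the Porter stemmer) and lemmatizers use far richer rules and vocabularies.

```python
SUFFIXES = ("ing", "ed", "s")  # checked in this order

def stem(word):
    """Crude rule-based stemmer: strip one known suffix."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            base = word[: -len(suffix)]
            # Undo consonant doubling, e.g. "runn" -> "run"
            if base[-1] == base[-2] and base[-1] not in "aeioulsz":
                base = base[:-1]
            return base
    return word

# Toy lemma dictionary (an assumption for this sketch)
LEMMAS = {"ran": "run", "running": "run", "better": "good"}

def lemmatize(word):
    """Dictionary lookup; unknown words are returned unchanged."""
    return LEMMAS.get(word, word)

for w in ["running", "ran", "better"]:
    print(w, "| stem:", stem(w), "| lemma:", lemmatize(w))
# running | stem: run | lemma: run
# ran | stem: ran | lemma: run
# better | stem: better | lemma: good
```

Notice that the stemmer leaves “ran” and “better” untouched, while the dictionary-based lemmatizer maps them to their base forms.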

4. Part-of-Speech (POS) Tagging

In this step, the system identifies each word’s grammatical role: noun, verb, adjective, and so on. For instance, in “The cat sits on the mat,” the word “cat” is tagged as a noun, and “sits” as a verb. POS tagging helps the machine understand sentence structure and context.
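To make the idea concrete, here is a toy tagger that simply looks each word up in a hand-written lexicon. The `LEXICON` table and the tag names are assumptions for this sketch; real taggers are statistical or neural models that use surrounding context to decide, for example, whether “run” is a noun or a verb.

```python
# Hand-written lexicon for one example sentence (illustrative only)
LEXICON = {"the": "DET", "cat": "NOUN", "mat": "NOUN", "sits": "VERB", "on": "ADP"}

def pos_tag(tokens):
    """Tag each token by dictionary lookup; unknown words get 'UNK'."""
    return [(t, LEXICON.get(t.lower(), "UNK")) for t in tokens]

print(pos_tag("The cat sits on the mat".split()))
# [('The', 'DET'), ('cat', 'NOUN'), ('sits', 'VERB'), ('on', 'ADP'), ('the', 'DET'), ('mat', 'NOUN')]
```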

5. Named Entity Recognition (NER)

Named entity recognition identifies real-world entities in text, such as people, places, organizations, and dates. For example, in “Apple released the iPhone in 2007,” the system detects “Apple” as an organization and “2007” as a date. This is widely used in news analysis, data extraction, and search engines.
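A very simplified sketch of the idea combines a list of known names (a “gazetteer”) with a pattern for years. The `ORGANIZATIONS` set and `find_entities` function are hypothetical; real NER systems are trained models that can tell Apple the company from apple the fruit using context.

```python
import re

ORGANIZATIONS = {"Apple", "Google", "IBM"}      # hypothetical gazetteer
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")  # four-digit years 1900-2099

def find_entities(text):
    """Return (text, label) pairs for known organizations and years."""
    entities = []
    for token in re.findall(r"\w+", text):
        if token in ORGANIZATIONS:
            entities.append((token, "ORG"))
    for match in YEAR_PATTERN.finditer(text):
        entities.append((match.group(), "DATE"))
    return entities

print(find_entities("Apple released the iPhone in 2007"))
# [('Apple', 'ORG'), ('2007', 'DATE')]
```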

6. Sentiment Analysis

This technique determines the emotion or tone behind a text: positive, negative, or neutral. For instance, “This movie was amazing!” is positive, while “I hated the ending” is negative. Companies use this to study customer feedback and social media sentiment.
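One of the simplest ways to approximate this is a word-list (lexicon) approach: count positive and negative words and compare. The word lists below are small assumptions made up for this sketch, and the method ignores negation and sarcasm, which trained models handle much better.

```python
import re

POSITIVE = {"amazing", "great", "loved", "good"}    # illustrative word lists
NEGATIVE = {"hated", "terrible", "boring", "bad"}

def sentiment(text):
    """Label text by counting positive versus negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("This movie was amazing!"))  # positive
print(sentiment("I hated the ending"))       # negative
```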

7. Word Embeddings and Vectorization

Computers can’t “feel” words; they work with numbers. Word embeddings convert words into vectors of numbers so machines can measure how related two words are. For example, “king” and “queen” would have similar vector representations because they appear in similar contexts and share meaning. This allows AI systems to capture relationships between words.
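Relatedness between two word vectors is commonly measured with cosine similarity. The three-dimensional vectors below are invented for illustration; real embeddings are learned from large text collections and typically have hundreds of dimensions.

```python
import math

# Made-up 3-dimensional vectors, for illustration only
VECTORS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)

print(cosine_similarity(VECTORS["king"], VECTORS["queen"]))  # close to 1
print(cosine_similarity(VECTORS["king"], VECTORS["apple"]))  # much lower
```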

8. Dependency Parsing

This technique maps how words in a sentence relate to one another. In the sentence “The student who studied hard passed the exam,” dependency parsing helps the system see that “student” is the subject of “passed,” even though the clause “who studied hard” sits between them. It’s crucial for understanding complex sentence structures and context.
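The output of a dependency parser can be pictured as a list of arcs, each linking a word to the word it depends on. The arcs below were written by hand for the example sentence (they are not produced by a parser), and the relation labels loosely follow the Universal Dependencies naming style. Words are used as keys for simplicity; real parsers use token positions.

```python
# (dependent, relation, head) triples, annotated by hand for illustration
ARCS = [
    ("The", "det", "student"),
    ("student", "nsubj", "passed"),
    ("who", "nsubj", "studied"),
    ("studied", "acl:relcl", "student"),
    ("hard", "advmod", "studied"),
    ("passed", "root", None),
    ("the", "det", "exam"),
    ("exam", "obj", "passed"),
]

def dependents_of(head, arcs):
    """Return (word, relation) pairs for every word attached to `head`."""
    return [(dep, rel) for dep, rel, h in arcs if h == head]

print(dependents_of("passed", ARCS))
# [('student', 'nsubj'), ('exam', 'obj')]
```

Reading the arcs this way shows directly that “student” is the subject of “passed” and “exam” is its object.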

Tasks of Natural Language Processing

Now that we’ve seen how machines learn language, let’s explore what they can do with it. These are the tasks of NLP, ranging from simple analysis to full-fledged conversation.

1. Text Classification

NLP can automatically assign categories or labels to text.

Examples:

- Sorting emails into “Inbox,” “Spam,” or “Promotions.”

- Categorizing news articles into topics like sports, finance, or politics.

- Detecting harmful or offensive content on social media.
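A classic way to build such a classifier is Naive Bayes, which scores each label by how often the message's words appeared under that label in training data. The sketch below is a minimal version with add-one smoothing; the four training messages are invented for illustration, and a real spam filter would learn from many thousands of examples.

```python
import math
from collections import Counter, defaultdict

# Invented training data, for illustration only
TRAINING = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "inbox"),
    ("lunch with the project team", "inbox"),
]

def train(examples):
    """Count words per label and documents per label."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, label_counts, vocab

def classify(text, model):
    """Return the label with the highest log-probability score."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total_docs)  # label prior
        total_words = sum(word_counts[label].values())
        for word in text.split():
            # Add-one smoothing keeps unseen words from zeroing the score
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

model = train(TRAINING)
print(classify("free prize now", model))          # spam
print(classify("project meeting monday", model))  # inbox
```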

2. Machine Translation

Tools like Google Translate and DeepL use NLP to convert text from one language to another. Modern systems go beyond literal word substitution: they consider grammar, idioms, and context. For instance, translating “break a leg” into Hindi or French preserves the meaning rather than converting the words directly.

Did You Know?

The roots of NLP go back to the 1950s! The first public demonstration of machine translation, the Georgetown-IBM experiment, took place in 1954, when a computer translated more than 60 Russian sentences into English.

3. Question Answering

This task allows systems like ChatGPT, Gemini, or Cortana to answer user questions. For example, when you ask “Who is the Prime Minister of India?”, a traditional question-answering pipeline identifies the question type (a “who” question that expects a person), extracts the key phrase “Prime Minister of India,” and retrieves the relevant answer.
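The retrieval step can be sketched as keyword overlap: pick the stored passage that shares the most content words with the question. The `PASSAGES` list and helper names below are assumptions for this example, and modern assistants rely on much more sophisticated models than this.

```python
import re

# Small illustrative knowledge base
PASSAGES = [
    "The Georgetown-IBM experiment took place in 1954.",
    "Tokenization splits text into smaller pieces called tokens.",
    "Stemming cuts off word endings.",
]
QUESTION_STOP_WORDS = {"the", "a", "an", "is", "what", "who", "when", "did", "does", "in", "of"}

def keywords(text):
    """Lowercase content words, with question and function words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in QUESTION_STOP_WORDS}

def answer(question):
    """Return the passage sharing the most keywords with the question."""
    q = keywords(question)
    return max(PASSAGES, key=lambda p: len(q & keywords(p)))

print(answer("When did the Georgetown-IBM experiment take place?"))
# The Georgetown-IBM experiment took place in 1954.
```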

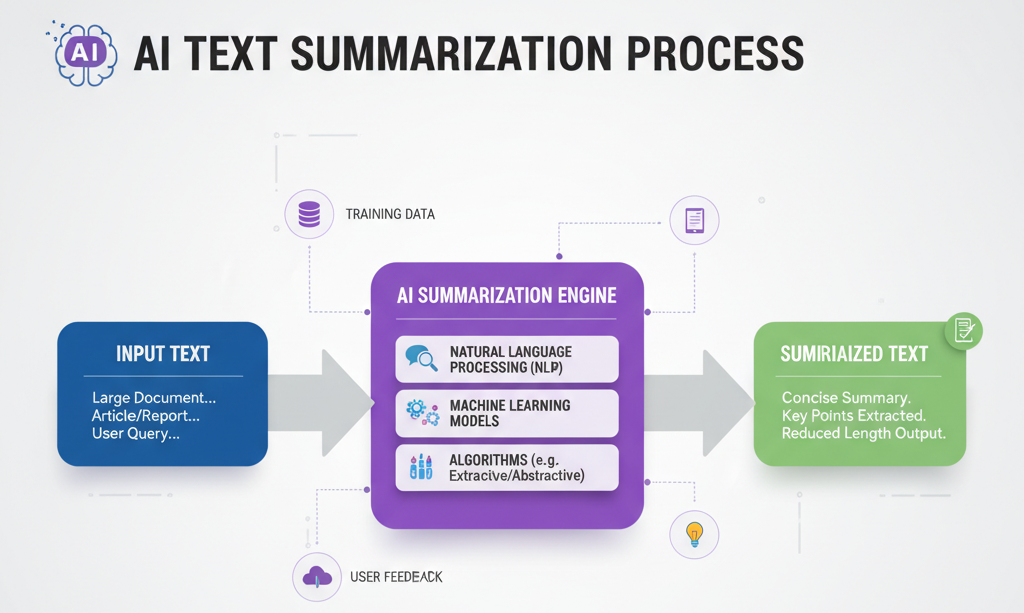

4. Text Summarization

Long documents can be condensed into concise summaries using NLP.

There are two types:

- Extractive summarization picks key sentences directly from the text.

- Abstractive summarization rephrases content like a human would.

News apps and research databases use this to save time and improve accessibility.
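Extractive summarization in its simplest form can be sketched by scoring each sentence by how frequent its words are in the whole text, then keeping the top scorers. The `summarize` function is a hypothetical helper; note that this naive scoring favors longer sentences, which real systems correct for.

```python
import re
from collections import Counter

def summarize(text, num_sentences=1):
    """Return the highest-scoring sentences, in their original order."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    freq = Counter(re.findall(r"[a-z]+", text.lower()))

    def score(sentence):
        # Sum of document-wide frequencies of the sentence's words
        return sum(freq[w] for w in re.findall(r"[a-z]+", sentence.lower()))

    top = sorted(sentences, key=score, reverse=True)[:num_sentences]
    return [s for s in sentences if s in top]

text = ("NLP helps computers read text. The weather was nice. "
        "NLP systems summarize text so readers save time reading text.")
print(summarize(text))
# ['NLP systems summarize text so readers save time reading text.']
```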

5. Speech Recognition and Generation

Voice-based systems like Siri, Alexa, and Google Assistant rely on NLP to convert spoken words into text (speech recognition) and respond using human-like voices (speech generation).

For example, when you say “Set an alarm for 7 a.m.,” NLP ensures the system interprets your intent correctly.

6. Sentiment and Emotion Detection

NLP tools analyze tweets, reviews, or chat messages to determine public opinion or emotional tone. Businesses use this for brand monitoring, while governments can study public sentiment during elections or crises.

7. Text Generation and Chatbots

Modern AI chatbots and assistants are capable of generating entire conversations, stories, or even code. Systems like ChatGPT use NLP to predict and generate coherent, contextually relevant text, making human–computer interaction more natural than ever.
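The core idea of predicting the next word can be shown with a tiny bigram model, which picks the word that most often followed the current one in its training text. This is only a sketch on an invented twelve-word corpus; systems like ChatGPT use large neural networks trained on vastly more data, not bigram counts.

```python
from collections import Counter, defaultdict

CORPUS = "the cat sat on the mat . the cat ate the fish ."  # invented corpus

def build_bigrams(text):
    """Map each word to a Counter of the words that follow it."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, length):
    """Greedily extend `start` with the most frequent next word."""
    out = [start]
    for _ in range(length - 1):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # no known continuation
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

model = build_bigrams(CORPUS)
print(generate(model, "the", 4))
```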

8. Information Extraction

This involves pulling structured information from unstructured text. For instance, a system might extract key details such as names, prices, and dates from a news report or research paper.

It’s vital for industries like law, journalism, and healthcare, where large volumes of text need to be analyzed efficiently.
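For well-formatted details such as prices and dates, a few regular expressions already go a long way. The sketch below assumes dollar prices and ISO-style dates (YYYY-MM-DD); `extract_fields` is an illustrative helper, and free-form details like names usually require trained models instead.

```python
import re

def extract_fields(text):
    """Pull dollar prices and YYYY-MM-DD dates out of free text."""
    return {
        # "$" + digits, optional thousands groups, optional cents
        "prices": re.findall(r"\$\d+(?:,\d{3})*(?:\.\d{2})?", text),
        "dates": re.findall(r"\b\d{4}-\d{2}-\d{2}\b", text),
    }

print(extract_fields("The laptop cost $1,299.00 on 2024-03-15, down from $1,499.00."))
# {'prices': ['$1,299.00', '$1,499.00'], 'dates': ['2024-03-15']}
```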

Beyond Techniques and Tasks: Why It Matters

These techniques and tasks might sound technical, but their real power lies in their impact. NLP is not just about making computers smarter; it’s about making communication easier, faster, and more inclusive.

In India, for instance, multilingual NLP is helping build translation systems that bridge regional languages, allowing millions to access information in their native tongue. In healthcare, NLP assists doctors by analyzing patient notes and predicting medical outcomes.

The scope of Natural Language Processing is growing rapidly, from personal assistants and customer support to research, education, and accessibility. Each breakthrough brings us closer to machines that truly understand not just what we say, but what we mean.

From breaking text into tokens to understanding emotions and generating fluent responses, Natural Language Processing is what gives AI its voice and its ability to listen. The combination of these techniques and tasks forms the backbone of every meaningful interaction between humans and machines.